In part one of this series, we established the motivation behind Einstein Behavior Score (EBS), a powerful tool for marketing and sales teams in enterprises and small businesses alike. We described the evolution in our modeling approaches and the challenges that motivated us to find effective solutions that worked for all of our customers.

In this section, we will describe our insights generation framework and share our journey on model explainability. We will then show you how we generate scores and insights for all customers through a model tournament, so that enterprises and small businesses alike can benefit from our machine learning products. Finally, we’ll describe the architecture that powers all of this and our monitoring framework to oversee the performance of our models in production.

Insights

Insights explain why the EBS score is what it is, build trust in the score, and add more context that might be relevant to the marketer when he or she tries to decide on the action they are going to pursue with that prospect.

Today, EBS customers can see record level insights on each prospect (lead/contact) showing them the top positive factors and negative factors impacting their score. In the upcoming release in Spring 2020, customers will also be able to view the primary factors driving the scores across all prospects, to see broad business trends through the EBS Global Factors dashboard in the Pardot B2BMA app.

As with our models, our approach to generating insights has also evolved with experience. When we started out building Einstein for Sales and Marketing, our models were simple linear models. You could think of these as akin to a subject matter expert assigning weights to various activities that constitute EBS, except these weights are learned by a machine learning algorithm. Explaining the scores, in this case, is as simple as surfacing the activities with the highest positive and negative weights. These models are simple and intuitive, marketers can understand how their actions may impact the score of their prospects.

The challenge with such simple models, however, is that sometimes their accuracy is limited. Often, the signals may interact with each other quite strongly and that could determine whether a prospect will convert. Such interactions cannot be captured by our simpler models.

For example, let’s take two prospects browsing your website. One of them first visited your website just a couple of days ago but has been highly engaged, while the other first visited your website about a month ago. She has shown some engagement in the past but her levels of engagement have peaked only recently. It could so happen that the prospect who has been around longer has already done the due diligence and is now looking for more information as they are getting ready to buy, while the prospect who just stumbled on your website may need more time to digest the information about your product or service offerings. Thus, even though both prospects have the same levels of engagement, we could expect the older prospect to be more ready to buy.

We could build a more complex model like a RandomForest or a Gradient Boosted Decision Trees classifier to automatically capture such interactions, resulting in a more accurate model. However, explaining the predictions from some of these more complex models could be harder. In our journey in building Einstein for Sales and Marketing, we explored a variety of techniques to ensure we don’t have to sacrifice model accuracy for interpretability and vice versa. The first approach is in using a simple surrogate model to approximate the predictions from a more complex model as shown below. There are variations of this approach that can provide local insights using techniques such as LIME.

While this approach does not compromise accuracy for interpretability, it imposes some engineering inconveniences. Instead of training and maintaining one model, we need to maintain two. The two models need to be in sync, investigating customer support cases relating to counter-intuitive scores and insights for one or more prospects will require double the amount of effort that we would need with a single model. Thus, we explored an alternative technique called SHAP, where in a single model is used to generate both scores and insights without compromising accuracy for interpretability. SHAP uses any pre-trained complex model (e.g. XGBoost) as a black box and generates so called SHAP values, which are additive feature attributions for the score, i.e. the behavior score is broken down into the sum of contributions of every feature to the score. SHAP is a relatively new evolution of the concept of SHAP values from game theory, and it’s essentially a collection of computational tricks that make it feasible to calculate SHAP values on large data sets with multiple features. The intuition behind the essence of SHAP is explained below:

We train a complex model like Gradient Booster Decision Trees or a RandomForest on our modeling dataset like before. Now if we need to understand the factors influencing the score for a new lead with ID 2734, we run it through our learned model and record its score. Let’s say it is 88. If we need to understand if the title of the lead is more influential than, let us say, the annual revenue of the company associated with the lead, we could do the following:

- Measure the prediction from the model in the absence of the title field, let’s say it is 35.

- Measure the prediction from the model in the absence of the annual revenue field, let’s say it is 72.

We can infer from the above that title is more influential than annual revenue for this lead because its score dropped by 53 points when the model did not have the knowledge of the title field compared to only a 16 point drop when the model did not have knowledge of the annual revenue field. In both cases, given that the original score dropped, we can say that both these factors positively influence the outcome for this lead. SHAP computes these influence scores in an unbiased and efficient fashion, while accounting for the order in which the various features are presented to the model. For a deeper dive on SHAP, we refer interested readers to Lundberg et. al, the blog post by Gabriel Tseng and the paper on Shapley sampling values by Strumbelj et. al.

We generate local insights in EBS by calculating SHAP values for every scored prospect, sorting them and then picking the features with the highest positive SHAP values to generate positive insights and those with the highest negative SHAP values to generate negative insights. In essence, features with positive SHAP values push up the behavior score and those with negative SHAP values drive down the behavior score as shown below:

Using SHAP not only can we generate prospect level insights, we can also roll them up to generate global influence of signals across all prospects. In EBS today, for those customers for whom we could train a supervised model, the scores and insights are powered by SHAP. In Spring 2020 release, we will also be releasing a SHAP powered global factors dashboard embedded in the B2BMA app on Einstein Analytics, a glimpse of which is shown below.

Scores and insights for all customers: Model Tournament

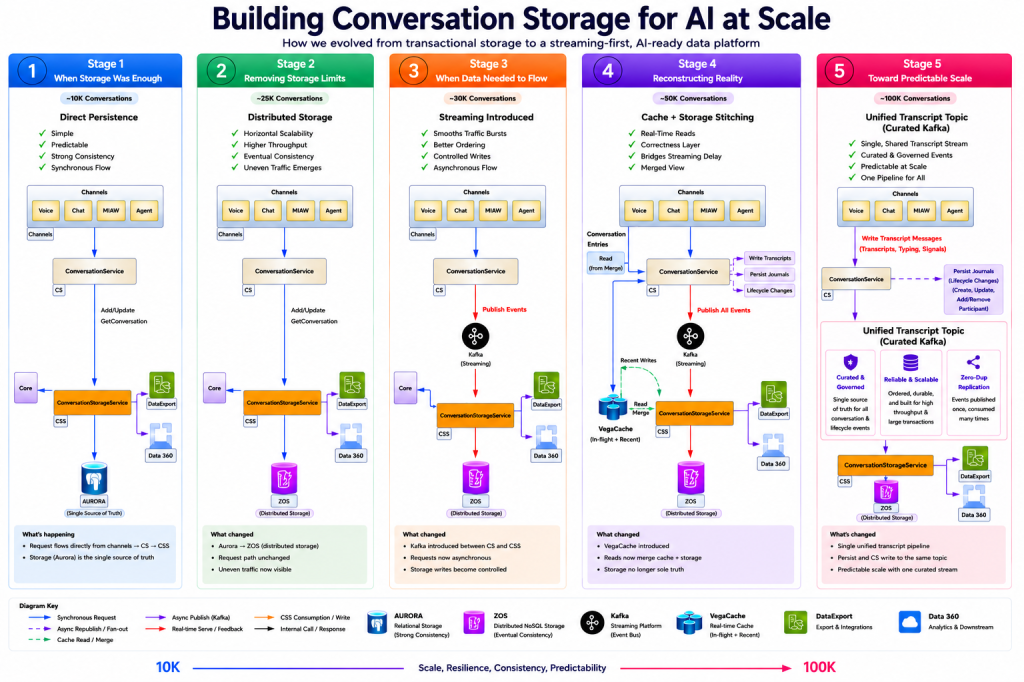

We strive to generate high quality scores for all customers, no matter how small or large they may be and how much historical data they may have. In the model tournament, we have a baseline model built from a minimal list of signals that we expect all customers to have, for customers with a richer history, we build enhanced models using enriched signals. We apply a variety of algorithms to train models on the baseline and enhanced signals and pick the winning model based on its accuracy. The customers see scores and insights from the winning model. This is illustrated in the figure below.

Let’s look at an example of this in action. Our first customer, Acme Corp, has a moderately long history of prospects linking to opportunities. With about 14-months of historical data and 3.5% conversion rate, a simpler model such as Logistic Regression, was the winner with an AUC of 0.78. Our next customer, Initech, a relatively young company, had only about 6-months of historical data of prospects linking to opportunities. Given the short history and the even smaller conversion rate (0.5%), the unsupervised model performed best for this customer. For Hooli Inc, however, we had a rich history of over 28 months of prospect engagement and prospects linking to opportunity events, with a conversion ratio of 5.5%. A more powerful model like XGBoost was the winner in the model tournament, with an AUC of 0.82.

Thus, each of these three customers got the scores and insights from the best performing model for the amount of data they had, they did not have to wait for many months before they could become ready to leverage Einstein Behavior Score.

Architecture Overview

Training predictive models, detecting engagement patterns, and generating scores and insights at scale is a challenging engineering problem. Thankfully, we can harness the power of the Einstein Platform to get this job done. The Einstein Platform is the trusted, secure, scalable and battle-tested machine learning platform that is at the heart of many sales-related Einstein features — including Einstein Opportunity and Lead Scoring, Einstein Opportunity and Account Insights, and Einstein Predictive Forecasting.

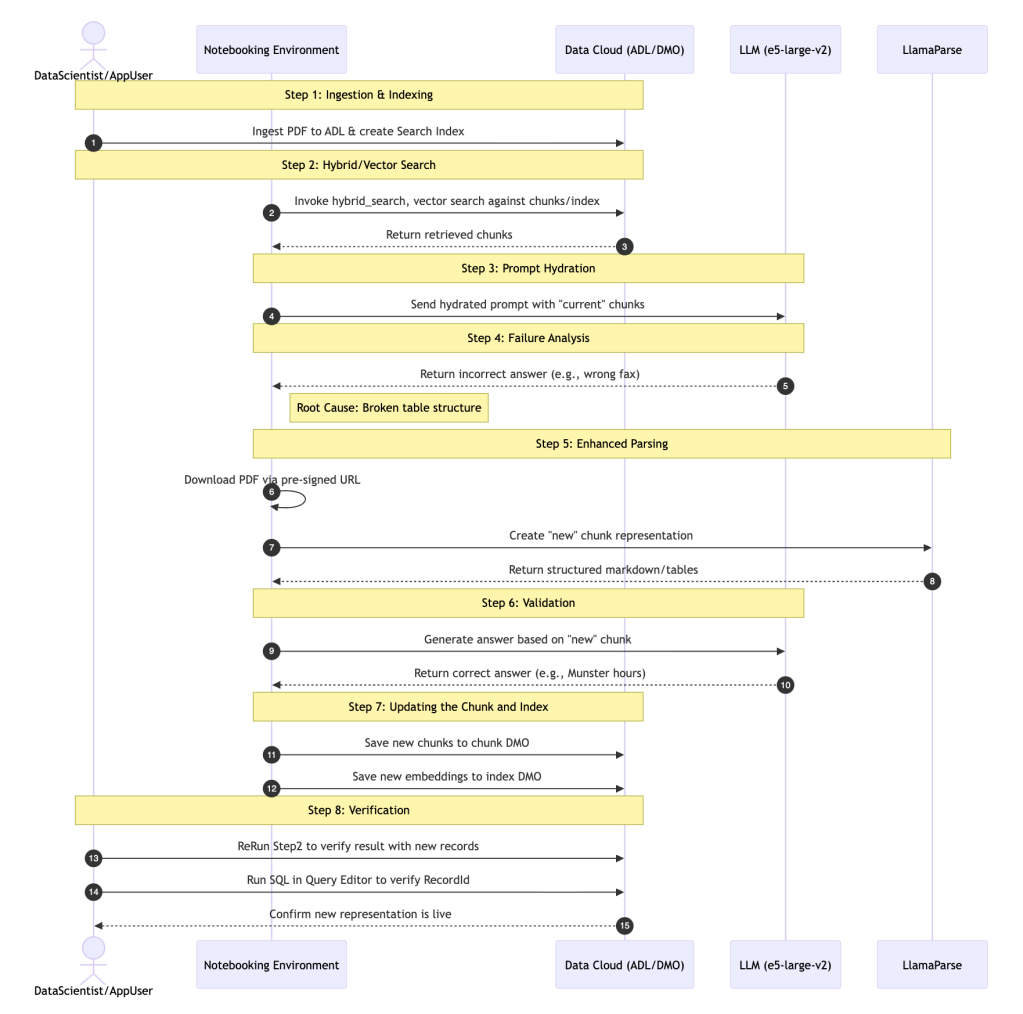

Within Einstein Platform, data is processed as a stream, with the following components:

- Data ingestion and storage. The Einstein Platform reads data from your Salesforce organization, your Pardot accounts, and other sources of data. The ingestion is performed through public API endpoints, that support efficient bulk transfers of large quantities of data. Data is incrementally fetched from the data sources to the Einstein Platform, and is stored in a multi-tenant NoSQL document store.

- Data processing. Einstein Behavior Scoring uses RabbitMQ and the Celery framework to distribute processing tasks to a cluster of worker nodes. A worker node picks up tasks and may create more tasks, enqueuing them into RabbitMQ. The utilization of a work-queue approach makes it easy to monitor and scale out according to the current and projected load. Examples of tasks may include fetching data, building a predictive model, generating scores, and pushing data back to the customer organization in the Salesforce multi-tenant organizational CRM database. Internally, some tasks may store and cache data on a Redis instance. Zookeeper is used for some tasks that require synchronization.

- Deployment pipeline. The Einstein Platform contains first-class support for quick and easy development and deployment to production. Continuous Delivery (CD) and Continuous Integration (CI) are managed by a set of custom tooling, as well as Jenkins instances. We use Docker for packaging and delivering the binaries from development, through staging, data-testing, canary to production

- Platform services. Einstein Behavior Scoring uses the Einstein Platform’s API in order to onboard and configure customer organizations. The platform keeps track of the configuration in an RDBMS cluster. The platform provides useful services like orchestration of work; monitoring and alerting. We use Splunk for log collection and search, as well as for building custom monitoring dashboards.

See this diagram for a full picture of the various components and their relationships.

In the Einstein Platform, the work is separated into cycles, which are further broken down into cycle steps. For example, fetching data from the CRM org, fetching data from Pardot Accounts, and generating scores are 3 distinct cycles. A cycle processing begins with a worker (Batch Processing Services) calling into the orchestration service (Config Server) and picking up an org to work on. As explained above, the processing involves interacting with NoSQL document stores, an RDBMS and Redis. Subsequent cycle steps are enqueued into RabbitMQ and are dequeued by other workers. The results of the scoring process are pushed back to the CRM core. Auxiliary services like third party monitoring and alerting tools are also depicted, as well as parts of the CI and CD processes — Jenkins, ECR and Docker.

Monitoring Pipelines and Model Metrics

Every EBS customer has an active model powering their scores and insights. Models can be sensitive to new data and noise, so we would want to monitor model performance in real-time as well as in near real-time. To ensure that these models continue to perform well and provide quality scores and insights, we use a wealth of monitoring tools. In addition to tools for data scientists and engineers, we also have monitoring tools built specifically for product managers and DevOps to keep track of product adoption and system health.

We use tools such as local (per customer organization level) Jupyter notebooks to global (aggregated, cross-organization level) Jupyter notebooks as well as Splunk dashboards for monitoring.

Local notebooks are created or updated every scoring and training cycle. These notebooks are used by data scientists to check model metrics, scores and insights distribution for each customer org immediately after the cycle runs. Metrics such as forecast_horizon, model parameters, AUROC, AUPRC, cross validation stats such as precision, recall, f1 score and train-test curves, ROC and Precision-recall curves, cumulative gain curves and SHAP feature importance plots are visualized in the training notebooks while scores and insight distribution for all available models are captured in the scoring notebooks. The global Jupyter notebooks provide an aggregate view of model performance across all orgs with the ability to drill down into specific orgs for more details.

During the development cycle, engineers test their features in a staging environment using synthetic data that mimics the data in a customer’s organization. This allows us to identify, debug, and fix defects before deploying to production. Additionally, we’re also able to stress test the performance of our pipelines by scaling the synthetic data to arbitrary sizes and shapes.

In addition to the engineering team building EBS, our DevOps team monitors memory usage, CPU time, and the time taken for each step in the pipeline for all customer orgs collectively. The team is alerted when metrics exceed key thresholds.

Product managers have access to an Org Health and Customer Adoption dashboard to measure usage and adoption metrics such as daily/weekly/monthly active users, daily/weekly/monthly active organizations and the deltas in these metrics over time. This helps them to proactively reach out to customers before they experience any concerns with our product.

Conclusion

Einstein Behavior Scoring is a fundamental component of Pardot Einstein, empowering sales and marketing teams to pursue the right prospects by helping them identify those who are ready to buy and those who require more nurturing. Pardot Einstein was built on the rich experiences the team gained in building features in Sales Cloud Einstein. As our understanding of the challenges and solutions in this space has evolved, we’ve improved our solutions across all product lines.

For B2B Marketers, Einstein Behavior Scoring provides a more intuitive and accurate scoring experience, allowing many to remove the hat of keeping their manual, rules-based scoring model up to date to reflect their fast-pace, constantly evolving marketing efforts. As new engagement patterns arise, Einstein will learn them and adjust scores and insights automatically. This enables a strong level of confidence in identifying which prospects are ready to be passed to sales and which prospects require tailored nurturing. EBS also enables closer alignment between marketing and sales by surfacing the most important prospect engagement signals right where each team works.

For sales professionals, Einstein Behavior Scoring shows the critical prospect engagement patterns they need to know in order to prioritize the right prospects and tailor their messaging to drive better sales outcomes. Now sales reps know who is engaged, what they’re engaging with, and how they compare against all others.

Einstein Behavior Score is the fruit of the collective effort of multiple teams spanning across Engineering, Product, UX and Marketing. We’re particularly grateful for all the feedback that our Pilot customers provided to us along the way to help shape this product; without their valuable inputs, we wouldn’t have gotten this far.

We hope you enjoyed this technical deep-dive on the inner workings of Einstein Behavior Score and we’d love to hear your questions and feedback. Let us know in your comments below!