I18n. L10n. K8s. Kids these days are all about the numeronyms, am I right? Well, never one to be on time to the party, I’ve decided to coin my own: M10y. It stands for one of my favorite under-appreciated subjects: Multitenancy! (To be perfectly honest, I just got tired of typing “multitenancy” so much.)

If you’re not already familiar, M10y is the idea that a single software service can simultaneously play host to multiple different groups of users, who are completely isolated from each other. It’s been a key part of our success over the years here at S8e. (Get it? That’s “Salesforce”? … OK, fine, I relent.)

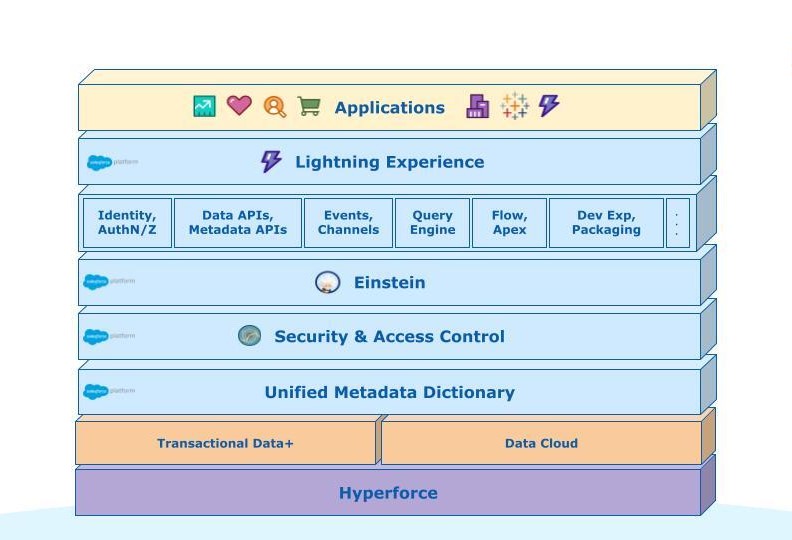

We built our technology stack from the ground up with software-level multitenancy, which means that we share physical infrastructure, but all customer data lives inside a strict logical container called a “tenant”. This approach has a bunch of benefits and trade-offs, which we’ll talk about in this post.

In a recent post, Igal Levy shared a bit about Salesforce’s infrastructure approach to multitenancy. In this post, we’re going to delve deeper into the architectural side. What does it really mean to be “multitenant”? And what are the principles that make it work well?

What is M10y, anyway?

Let’s start by getting clear on what we even mean by multitenancy.

Software is pretty much always used by multiple people. That’s the nature of software; programs are just bits you can copy, so people do. Thus, many people use the program at the same time, independently on their own computers. You and I don’t need different Tetris programs; we just run different copies of the same Tetris program, at the same time.

Some software (like Unix operating systems, or server-side web software) is simultaneously multi-user, which means the same running instance of the software can handle work for multiple users, at the same time. The software has to take care to not commingle the work of one user with another; it’s a pretty serious bug if it does. But this isn’t rocket science; lots of software does this just fine. For example, while some of us were perfectly happy with Pine, these days billions of people use Gmail every day. There aren’t billions of instances of Gmail running on Google’s servers, though; each running Gmail process is handling requests from thousands of independent users at the same time. Even so, everyone’s email is isolated from everyone else’s, regardless of which server handles their requests.

When we say multitenant, we mean something more than that. In a multitenant system, there are multiple groups of users using the system at the same time. These groups can interact with a pool of shared data and metadata … but not directly with data for other groups. Those groups (“tenants”) typically correspond to some organization in the real world (a corporation, non-profit, governmental agency, etc.), and the boundaries between groups are just as important as the boundaries between individuals in multi-user systems.

You can enforce multitenancy entirely at the software level, as Salesforce’s Core architecture does (that is, the system that powers Sales Cloud, Service Cloud, etc.). This usually works by creating a unique identifier for each tenant, and making sure that every user request, and every scrap of data in the database, is tagged with exactly one tenant ID, and that every query you write against the database adds that ID to its filter, like “WHERE organization_id = XYZ”.
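To make the “every query gets the tenant filter” idea concrete, here is a minimal sketch in Python using the standard-library `sqlite3` module. It is not how Salesforce’s Core is implemented; the `TenantScopedDb` class, the `account` table, and the org IDs are all made up for illustration. The point is only that application code never writes the `organization_id` filter by hand, so it can’t forget it.

```python
import sqlite3


class TenantScopedDb:
    """Hypothetical wrapper: every SELECT is filtered by exactly one tenant ID."""

    def __init__(self, conn, organization_id):
        self._conn = conn
        self._org_id = organization_id

    def select(self, table, columns, where="1=1", params=()):
        # table, columns, and where are assumed to come from trusted
        # application code (never from user input); values go in params.
        sql = (
            f"SELECT {', '.join(columns)} FROM {table} "
            f"WHERE organization_id = ? AND ({where})"
        )
        return self._conn.execute(sql, (self._org_id, *params)).fetchall()


# One shared table holds rows for every tenant, each tagged with its org ID.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE account (organization_id TEXT NOT NULL, name TEXT NOT NULL)"
)
conn.executemany(
    "INSERT INTO account VALUES (?, ?)",
    [("org_a", "Acme"), ("org_a", "Apex Tools"), ("org_b", "Globex")],
)

org_a = TenantScopedDb(conn, "org_a")
org_b = TenantScopedDb(conn, "org_b")

rows_a = org_a.select("account", ["name"])
rows_b = org_b.select("account", ["name"], "name LIKE ?", ("G%",))
```

Here `org_a` only ever sees its own two accounts, and `org_b` gets nothing back if it asks for “Acme”, even though all three rows live in the same table.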

Of course, software-level multitenancy isn’t the only option; VMs and containers can separate workloads at lower levels just as well. In fact, some things that are tricky problems with software-level multitenancy (like per-tenant data encryption) become trivial in that approach. Could Salesforce run another way? Could we give each tenant their own instance, running on separate VMs? Sure! And it would have made for simpler software, too! So why don’t we?

The reason is utilization. By running a multitenant service, rather than many thousands of single-tenant services, we make better use of the computers that we (and, ultimately, you) are paying for. This is particularly important for the services you’re not using all the time. If every tenant had to have its own relational database running in a VM, then smaller orgs would be paying for keeping that server running for the 99.9% of the time when they’re not actively querying it, because they want that data to stick around and be ready for that moment when they do want to use it. The same goes for plenty of other services, too–big data row storage in HBase, event pub-sub in Kafka, and so forth.

Users would have to pay significantly more for Salesforce if we ran it this way (not to mention how much more harmful it would be to the environment). This may not be the ultimate answer for all time, given how fast technologies change (heck, Docker didn’t even appear on the scene until 14 years after Salesforce started …). But it’s our best answer today.

Let’s talk about a few of the principles we follow, to do M10y well.

Isolation: Leaky Boats Don’t Float

Multi-user systems hold one principle sacrosanct: other users of the system shouldn’t be able to see or change your data. This is so basic, it’s almost not even worth mentioning.

For a multitenant system, the same thing is true one level “up”: users of one tenant shouldn’t be able to see or change data for another tenant. (It’s specifically not true at the level of the individual user — after all, there are MAD, or “Modify All Data”, users in every Salesforce org with god-like powers over that org’s data.)

For security reasons, the walls between one tenant and the next must be completely airtight. It should border on impossible for one tenant to directly view or change any data for another — intentionally or not — except via authenticated connections through the product’s front door. It should be similarly difficult for a Salesforce engineer to write code that breaks this, even for “seemingly” good reasons. Trust is our #1 value, and nothing is more important to us than the security and safety of our customers’ data.

Of course, there are other practical reasons why clear logical tenant isolation is a good thing, besides trust. Clean separation of tenants makes a system easier to reason about, especially when it comes time to physically move a tenant from one set of infrastructure to another (as we’ll talk about below). If there’s shared mutable data across multiple tenants, you might have to go through complicated gyrations to figure out what to do when those tenants move around. (For example, imagine a table of cross-tenant statistics; do you recalculate it every time a tenant is moved?) Similarly, tenants should be isolated enough to be forgotten (i.e. dropped) independently as well, with no shared secrets or shared mutable storage.

Keeping a clear separation between tenants is more work for software-level multitenancy than other flavors. If the software is entirely single-tenant, and runs on a multitenant substrate (like AWS), there isn’t even a question of leakage between tenants, because there are no structures to do it.

Scale Down: Don’t Let The First Bite Eat Your Lunch

We mentioned the cost structure of serving our architecture above, so let’s delve into that a bit more.

It’s easy enough to understand the rationale for sharing physical resources in the abstract–e.g. the “if every single tenant had to run their own database” argument above. But realize that this isn’t just about the actual tenants in our production environments; it applies to everything, including the need for creating lots of small tenants for demos, development, staging, production tests, and customer sandboxes.

Why do these small tenants matter? There are lots of reasons. Because this is enterprise software, customers don’t (generally) just enter their credit card and start paying for a fully loaded system; for larger deals, we spend months showing the system to potential customers and building customized demos, to make sure that our systems are really the right fit for them. And the shape of the sales funnel means you’re going to have a lot more demo environments than paid ones. So those had better be cheap!

Beyond that, even after a customer has bought our products, they are going to want to have a set of development environments (sandboxes), as well as testing and staging environments and … etc. This is a whole bunch of tenants that will mostly stay idle, which means our overall system will be dominated by mostly idle tenants. Thus, we need to take those idle tenants into account when doing cost planning.

This is where software-level multitenancy shines. A new Salesforce org requires no new tables, no new VMs, no new database instances … no new anything, other than a few hundred rows in a database (as we made clear a few episodes ago when we talked about our meta model). For all intents and purposes, these numerous, idle tenants are basically free. So, if you’re building a system with any other design, you need to figure out how to handle this case happily, without making the product too expensive to sell to anyone!
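As a rough sketch of why those idle tenants are so cheap, the example below provisions a hundred demo tenants in a shared schema and checks that no new tables appeared. Everything here is a stand-in: the `organization` and `setting` tables, the `DEFAULT_SETTINGS` values, and the `create_tenant` helper are hypothetical, and a real org involves far more rows than this. It uses Python’s standard `sqlite3` and `uuid` modules.

```python
import sqlite3
import uuid

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE organization (
        organization_id TEXT PRIMARY KEY,
        name            TEXT NOT NULL
    );
    CREATE TABLE setting (
        organization_id TEXT NOT NULL,
        setting_key     TEXT NOT NULL,
        setting_value   TEXT NOT NULL,
        PRIMARY KEY (organization_id, setting_key)
    );
    """
)

# Hypothetical defaults; stand-ins for the rows a new tenant would need.
DEFAULT_SETTINGS = {"locale": "en_US", "edition": "trial"}


def table_names(conn):
    """List the tables that currently exist in this database."""
    rows = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    )
    return [row[0] for row in rows]


def create_tenant(conn, name):
    """Provision a tenant by inserting rows only; no DDL, no new servers."""
    org_id = uuid.uuid4().hex
    with conn:  # commit both inserts together, or neither
        conn.execute("INSERT INTO organization VALUES (?, ?)", (org_id, name))
        conn.executemany(
            "INSERT INTO setting VALUES (?, ?, ?)",
            [(org_id, key, value) for key, value in DEFAULT_SETTINGS.items()],
        )
    return org_id


tables_before = table_names(conn)
demo_orgs = [create_tenant(conn, f"demo-{i}") for i in range(100)]
tables_after = table_names(conn)
```

After the loop, `tables_before` and `tables_after` are identical: a hundred new tenants cost a few hundred rows and nothing else.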

Scale Up: Pay More to Play More

In addition to scaling down well to small tenants, trials, etc., it’s also important to be able to scale up, to our largest customers. That means our tenancy architecture needs to give us a “slider” that we can move as far as any single tenant wants to move it, for a cost structure that still works well for all parties. Generally speaking, this means horizontal scale (adding lots of servers) is much better than vertical scale (buying really big servers), because big, fancy servers come with steeper price escalation.

As an example, Heroku’s architecture works this way by default, because it’s a layer on top of the already-scalable Amazon Web Services (AWS). Customers can choose to scale their Heroku app up to as many dynos as they’re willing to pay for (though doing that correctly is their responsibility, as we’ll see below).

In a software-based M10y approach, scaling up requires a bit more thought, because the system is fundamentally backed by a relational database (as we explained a few episodes ago, here). We put in a massive amount of engineering effort to enable these cases (which mostly goes unnoticed, as it should; after all, that’s our problem to solve, not yours).

Along with scaling storage, we need to consider scaling compute and network resources, and doing so in a way that provides fair allocation. Our customers should be able to get as much compute and storage as they need.

After horizontal scale, the key principle that enables the “slider” (from tiny sharded trials up to massive enterprise B2C scale) is the idea of portability, which we’ll talk about next.

Portability: Moving Shouldn’t Be Hard

With software-level multitenancy, there’s no escaping the fact that sometimes, tenants need to physically move from one place to another. Why? There are several reasons, including:

- Scalability. Sometimes, despite your best intentions, it’s not quite as simple as “pay more to play more”. You might have two small customers that used to happily coexist on the same infrastructure, but organic growth has made it so there’s not enough resources in that infrastructure to go around.

- Hardware Refresh. We’re in a constant cycle of retiring our oldest hardware stacks and building new ones that use the latest and greatest hardware available. When it’s time to move tenants off the old and onto the new, it’s easier to do this if tenants are individually portable.

- Product Interaction Latency. If you buy lots of Salesforce’s products, and you want those products to interact with each other in deep ways, then it may well be advantageous for them to be physically near each other (i.e. 5ms ping times, not 300ms ping times).

Thus, it’s super important that our tenancy architecture gives us the ability to redistribute tenants to new instances of the service. If you think about portability upfront, it can be a natural extension of multitenancy.

Of course, as data gets bigger, movement gets harder; data has gravity, and we’re not yet sending station wagons full of tapes around. There are ways to mitigate this problem, like building more of your systems on immutable primitives, which are easier to sync to a new location in the background over a long period of time (something we talked about a few episodes ago). Portability is secondary to scalability, in that we’d rather start with scalable systems, and then worry about moving tenants as needed.
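Here is a toy sketch of why clean tenant isolation helps portability: if every row carries exactly one tenant ID and nothing mutable is shared across tenants, “moving a tenant” reduces to copying that tenant’s rows and then dropping them from the source. The `account` table and the `new_instance` and `move_tenant` helpers are hypothetical, and this skips everything that makes real moves hard (background sync, cutover, data volume). It uses two in-memory `sqlite3` databases as stand-ins for two service instances.

```python
import sqlite3

SCHEMA = "CREATE TABLE account (organization_id TEXT NOT NULL, name TEXT NOT NULL)"


def new_instance():
    """Stand-in for one instance of the service, with the shared schema."""
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    return conn


def move_tenant(source, target, org_id):
    """Copy one tenant's rows to the target, verify, then drop from source."""
    rows = source.execute(
        "SELECT organization_id, name FROM account WHERE organization_id = ?",
        (org_id,),
    ).fetchall()

    with target:  # commit the copy as one transaction
        target.executemany("INSERT INTO account VALUES (?, ?)", rows)

    copied = target.execute(
        "SELECT COUNT(*) FROM account WHERE organization_id = ?", (org_id,)
    ).fetchone()[0]
    if copied != len(rows):
        raise RuntimeError(f"expected {len(rows)} rows on target, found {copied}")

    with source:  # only forget the tenant here once the copy is verified
        source.execute("DELETE FROM account WHERE organization_id = ?", (org_id,))
    return len(rows)


old_stack, new_stack = new_instance(), new_instance()
with old_stack:
    old_stack.executemany(
        "INSERT INTO account VALUES (?, ?)",
        [("org_a", "Acme"), ("org_a", "Apex Tools"), ("org_b", "Globex")],
    )

moved = move_tenant(old_stack, new_stack, "org_a")
```

Note that `org_b` never notices: its rows stay put on `old_stack`, and there is no cross-tenant state to recalculate.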

Responsibility: Know Who’s Got The Ball

As a SaaS vendor, one of the fundamental deals we’re making with our customers is that below a certain line, things that go wrong are our problem, not theirs. (To use the pizza-as-a-service example, if the kitchen in a pizza parlor catches on fire, that is not your responsibility as a patron of the restaurant.)

The problem is, sometimes it isn’t clear who’s responsible for which messes. This is particularly true in the realm of service protection. One of the main challenges of any kind of multitenant system is resource contention: actions of one tenant can (potentially) step on another tenant’s toes. (This is true even if only lower layers, like your database or storage network, are shared.) So Salesforce’s systems have many (many) ways to ensure that the actions of one customer (things like improper API integrations, poorly optimized SOQL queries, etc.) don’t overwhelm the system. We have a wide variety of resource limiting frameworks in place to ensure this, and that’s something we enhance and improve continuously with every release.
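One common shape for this kind of service protection is a per-tenant token bucket: each tenant gets its own budget of requests, so a noisy tenant runs out of its own tokens without eating anyone else’s. The sketch below is a generic illustration of that technique, not a description of Salesforce’s actual limiting frameworks; the `TenantRateLimiter` class, its parameters, and the org names are assumptions made up for this example.

```python
import time


class TenantRateLimiter:
    """Hypothetical per-tenant token bucket; one independent bucket per org."""

    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self._capacity = capacity
        self._refill = refill_per_second
        self._clock = clock  # injectable so the example is deterministic
        self._buckets = {}   # org_id -> (tokens_left, last_seen_time)

    def allow(self, org_id):
        now = self._clock()
        tokens, last = self._buckets.get(org_id, (self._capacity, now))
        # Refill based on elapsed time, never beyond the bucket's capacity.
        tokens = min(self._capacity, tokens + (now - last) * self._refill)
        if tokens >= 1:
            self._buckets[org_id] = (tokens - 1, now)
            return True
        self._buckets[org_id] = (tokens, now)
        return False


fake_now = [0.0]  # a fake clock we can advance by hand
limiter = TenantRateLimiter(
    capacity=3, refill_per_second=1.0, clock=lambda: fake_now[0]
)

noisy = [limiter.allow("org_noisy") for _ in range(5)]
quiet = limiter.allow("org_quiet")

fake_now[0] = 2.0  # two seconds later, org_noisy has earned tokens back
after_wait = limiter.allow("org_noisy")
```

The noisy org’s fourth and fifth requests are rejected, the quiet org is unaffected, and the noisy org is let back in once time has passed.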

There are other ways to draw this line, of course. For example, Heroku draws it lower in the stack, and gives customers a lot more control over what they build. But, the contract of responsibility is still very clear: if there’s a bug in your database connection string for something you build on Heroku, that’s your problem, not Heroku’s. Sales Cloud draws that line higher; the details of the service (like the specifics of database connection strings) are all hidden, so when something goes wrong at that level, it’s clearly our problem to fix it.

The point is, a good tenant architecture makes it very clear what is a tenant’s responsibility, and what is the platform’s. It’s a good idea to invest in levels of abstraction to keep that crystal clear. If a tenant can write custom logic or code (using platform features like Apex), then that code needs to be completely controlled by the customer, it needs to run in a 100% secure sandbox, and it needs to provide automated tests that the platform engineers can run to ensure they don’t break the customer-owned code. (Which is exactly what we do with Hammer tests.)

Independence: Don’t Take Away Your Own Tools

An interesting corollary of the previous point is that beyond making it clear who has what job, you should make sure that each group can actually, you know, do their jobs. And there’s a potential pitfall there, related to how you architect your multitenancy.

Every customer has different needs. In most cases, hopefully those different needs are handled by using something like metadata-driven customizability. But when they aren’t, sometimes a customer will ask you to change how the system works … just for them.

When we get this kind of request (which we do, a lot), the worst way to solve it is to fork our code. Why? Because it makes life harder for our engineering teams, which ultimately makes life harder for all our other customers. Instead of running one service, we’d now be running N slightly different services; and that harms our ability to innovate. Packaged software vendors spend 63% of their time on support, patches, and testing of old or custom versions, and that’s 63% we don’t have to (or want to) spend.

Similarly, we don’t want to get into a situation where a single customer needs to control our code release schedule. If a customer’s needs can force us to stay on a certain software version for an arbitrary amount of time, or dictate release moratorium schedules, it causes a lot of extra complexity and maintenance, which is almost as bad as a code fork. (If versioning is built into the product (like with CRM Core’s API), that’s different; we maintain backwards compatibility as a feature.) A continuous deployment approach, with releases controlled by feature flags, is a better way to solve this.
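For a feel of how feature flags sidestep forks, here is a small sketch: one codebase ships to everyone, and a flag with per-tenant overrides decides which path a given tenant takes. The `FeatureFlag` class, the `new_search` flag, and the org IDs are invented for illustration and aren’t taken from any real flag system.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureFlag:
    """Hypothetical flag: a global default plus optional per-tenant overrides."""

    name: str
    default: bool = False
    overrides: dict = field(default_factory=dict)  # org_id -> bool

    def is_enabled(self, org_id):
        return self.overrides.get(org_id, self.default)


# The new code path is deployed to everyone, but only switched on for a pilot.
new_search = FeatureFlag("new_search", default=False, overrides={"org_pilot": True})


def search(org_id, term):
    # One codebase, one release; the flag picks the behavior per tenant.
    if new_search.is_enabled(org_id):
        return f"v2 results for {term}"
    return f"v1 results for {term}"


pilot_result = search("org_pilot", "acme")
other_result = search("org_other", "acme")
```

Rolling the feature out to everyone later is a data change (flip `default`), not a new release, and nobody’s needs pinned the code to an old version in the meantime.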

It’s the same deal at the infrastructure layer; maybe a customer wants extra firewalls, or for us to use GPUs. But doing this is just as bad as creating code forks, because now there’s a unique environment we have to maintain and test and manage differently.

Instead, good multitenancy requires us to keep our engineering pipeline (shipping code, services, and infrastructure) independent of the needs of any one tenant. Just like the clean lines of responsibility for service protection, this clarity is essential if we’re going to keep innovating.

Part of the reason that code forking for individual tenants hasn’t been a bigger problem for CRM Core over the years is our platform: it gives customers an “escape hatch” when the system doesn’t behave exactly as they want it to, and it lets third parties (ISVs) come in and scratch the itches we don’t scratch.

The key point is: multitenant systems can’t trade off engineering agility to answer the needs of a single tenant; it’s always a losing bargain.

Conclusion

There are many aspects of our architecture that have contributed to our business success over the years, but multitenancy is among the most important. And, as we’ve grown and expanded, we’ve actually moved into additional forms of M10y too, like Heroku’s (Linux containerization on top of AWS) and Commerce Cloud’s (dedicated physical servers for each of their tenants’ large, high-traffic B2C commerce sites); we’ll talk more about those in future posts.

Thanks to all those who helped with this post: Alan Steckley, Bill Jamison, Christina Amiry, Darryn Dieken, David Mason, Eugene Kuznetsov, Jeanine Walters, Phil Mui, Scott Hansma, Walter Harley, and Walter Macklem. And of course, kudos to our own Mr. Pat Helland, whose Condos and Clouds was a big influence on my thinking on this subject.
