In our Engineering Energizers Q&A series, we highlight the engineering minds driving innovation across Salesforce. Today, we spotlight Gloria Tumushabe, Senior Software Engineer on the AI Cloud Infrastructure Team, which built Luminary — a centralized automation platform governing release workflows across AI Cloud and now supporting more than 100 services.

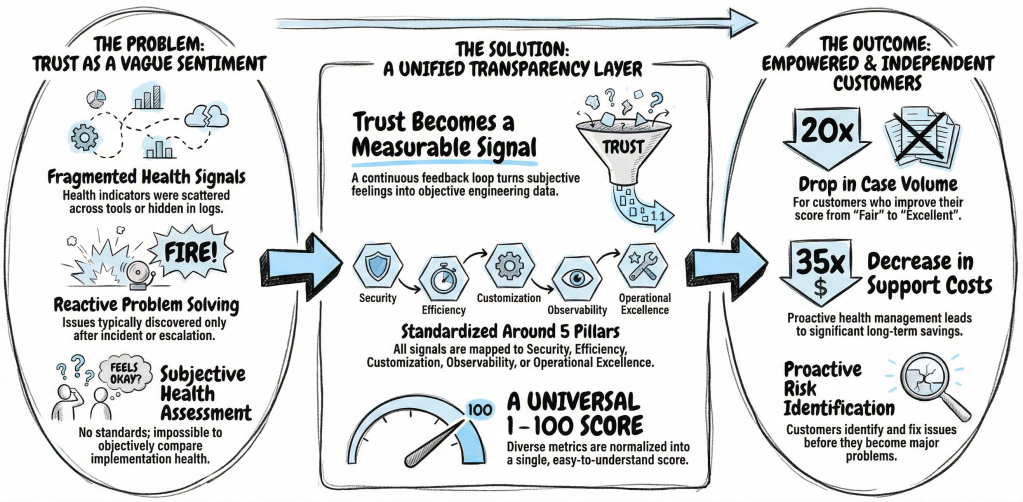

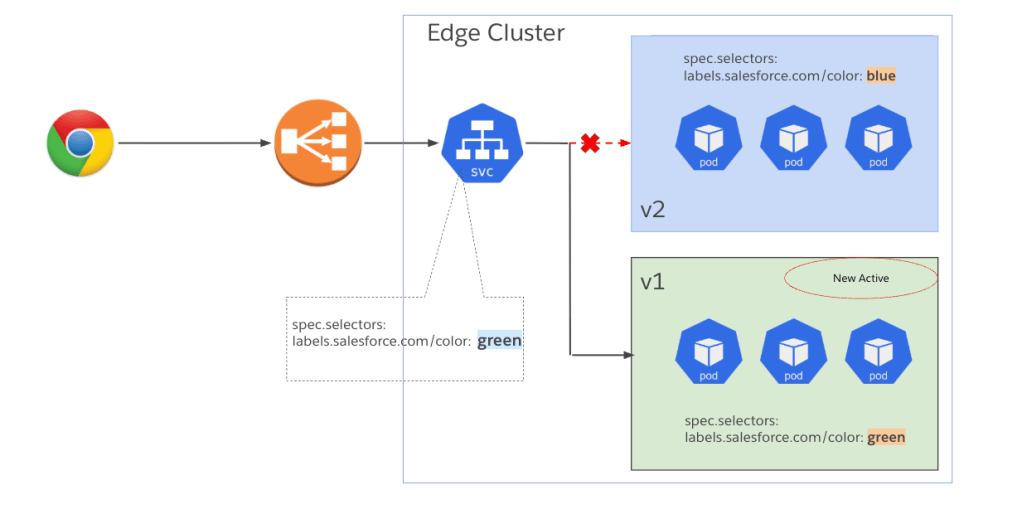

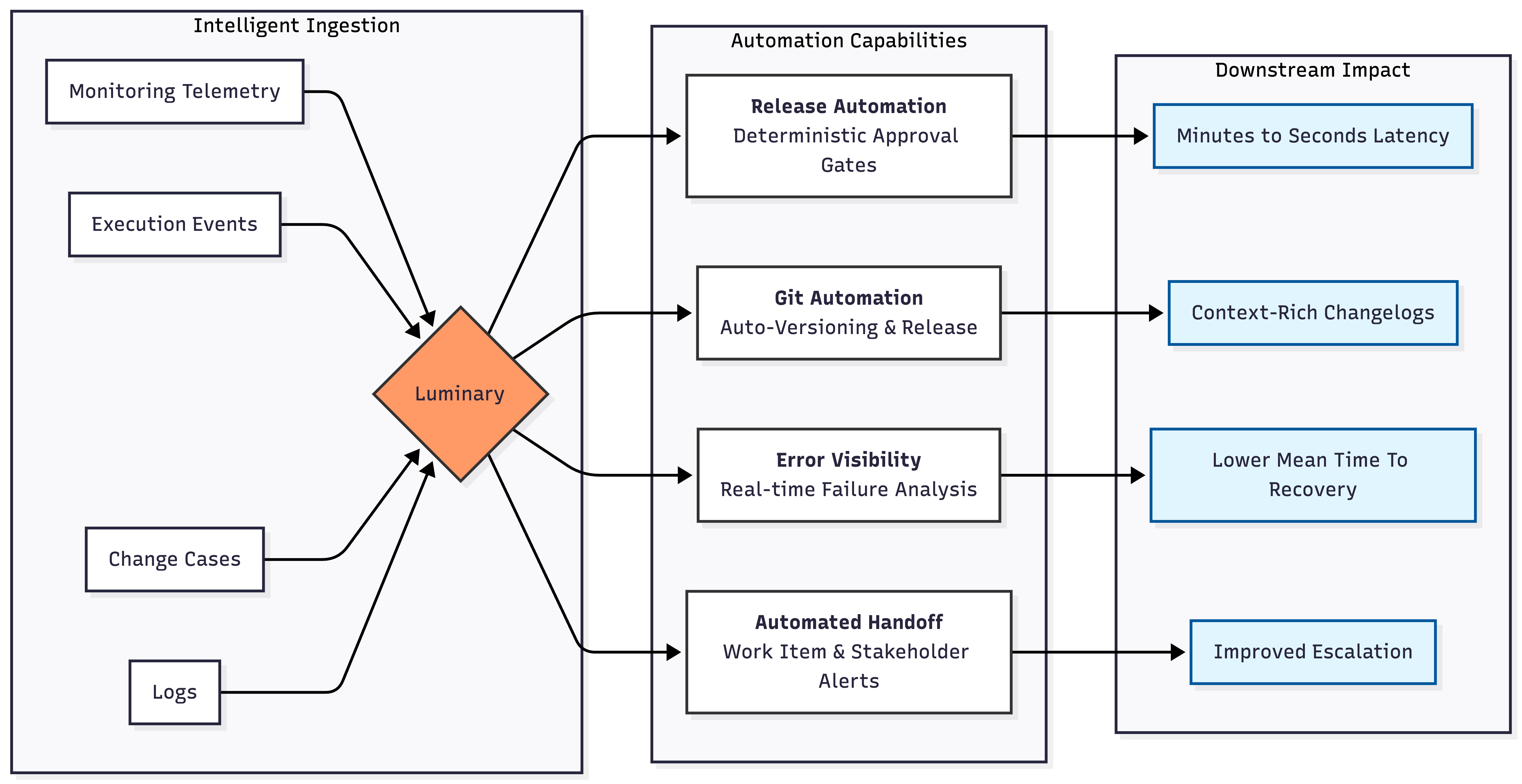

Explore how the team eliminated human-gated release approval latency by replacing manual verification with a deterministic approval engine inside Luminary, enforced production readiness across deeply interdependent services through automated dependency validation, and transformed fragmented operational workflows into a scalable release control plane.

Luminary automation capabilities.

What is your team’s mission in building the Luminary automation platform within Salesforce AI Cloud?

We remove manual operational friction from the AI Cloud release lifecycle and replace it with deterministic automation. Historically, release approvals, environment transitions, artifact creation, and readiness validation required engineers to coordinate across multiple dashboards and Slack threads. This fragmentation introduced human bottlenecks and increased the probability of missed signals. Confirming that a deployment wouldn’t break the ecosystem often took hours to days because release managers had to verify readiness by hand.

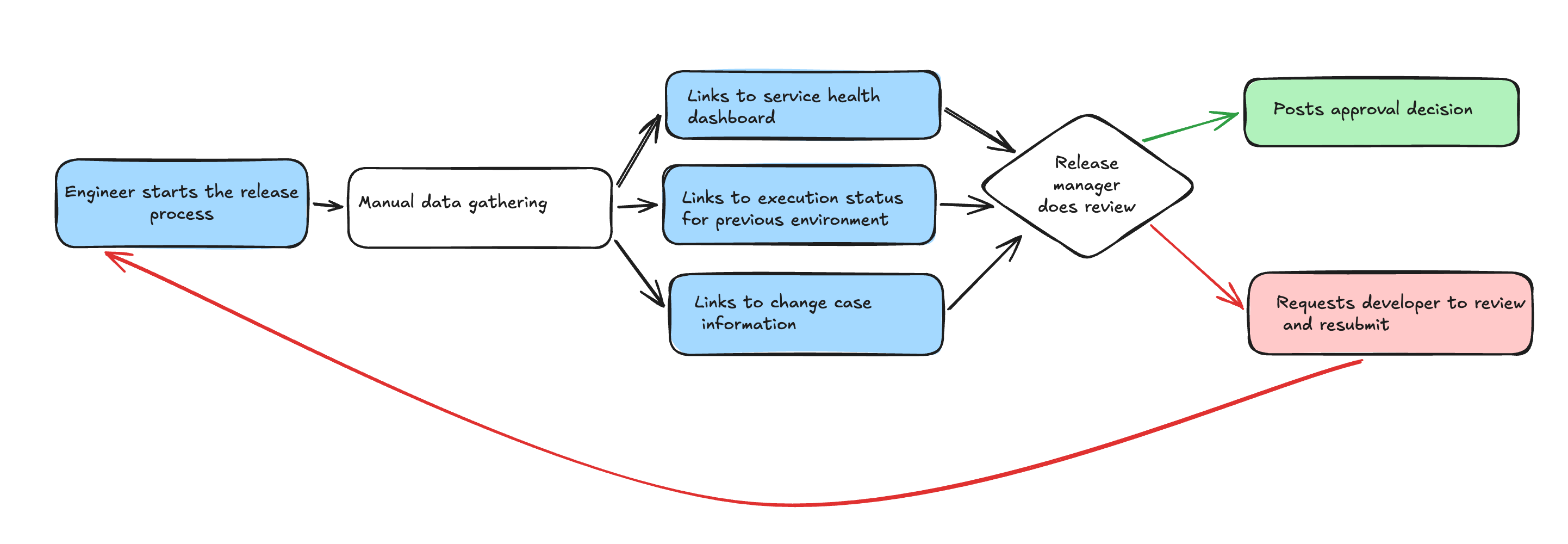

How the release process appeared prior to Luminary.

Within Luminary, we established a release control plane that validates production readiness before any code promotion occurs. Instead of relying on reactive human checks, Luminary evaluates SLO availability, PagerDuty escalation configuration, FIT results, and dependency health automatically. Operational criteria are now enforced programmatically rather than interpreted manually.

Beyond approvals, Luminary orchestrates Git release tagging, GUS work item creation, and service detection across FIs while providing real-time visibility into release states. Centralizing these workflows reduces cognitive load and raises the reliability bar. This approach achieves controlled velocity by accelerating AI feature delivery without increasing production risk.

Gloria explains why engineers should join Salesforce.

What human-gated release approval latency constrained deployment velocity within AI Cloud?

Human-dependent approval throughput created a significant constraint for the team. Service owners entered a waiting pattern while release managers manually verified FIT dashboards, SLO metrics, and dependency readiness. As adoption increased, deployment velocity became limited by reviewer capacity rather than engineering readiness.

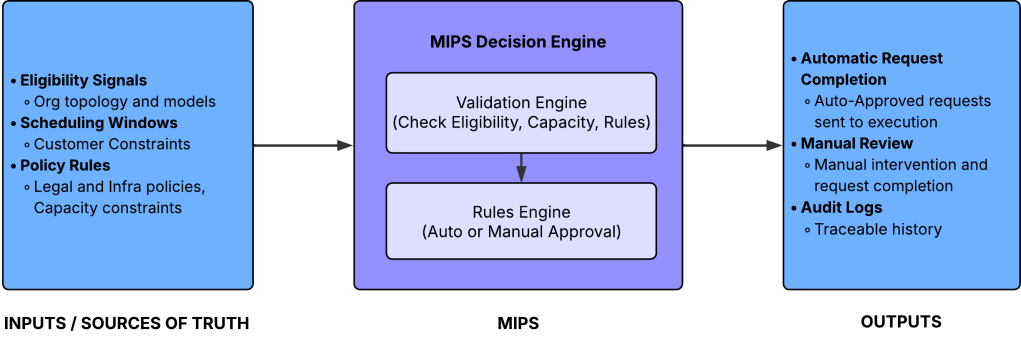

To solve this, the Luminary team engineered an automated approval engine that executes verification logic programmatically. The system pulls real-time availability telemetry from Argus, validates FIT results across the service boundary, and confirms escalation policies before granting promotion. This reasoning layer runs instantly inside Luminary’s Slack workflow to eliminate queue-based coordination.

This shift converted a serialized, human-gated process into a self-service model governed directly by Luminary. Engineers now receive approval the moment readiness thresholds are satisfied. While escalation paths remain for exceptional cases, Luminary governs the routine path end-to-end. Consequently, release velocity scales with system health rather than reviewer availability.
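As a rough illustration of what a deterministic approval check could look like, the sketch below evaluates a snapshot of readiness signals and returns either an approval or the list of reasons it was withheld. The names (`ReadinessSnapshot`, `Decision`, `evaluate`), the field layout, and the 0.999 availability threshold are all assumptions made for the example, not Luminary’s actual schema or policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReadinessSnapshot:
    """Hypothetical inputs; field names are illustrative only."""
    slo_availability: float             # e.g. 0.9995, taken from telemetry
    fit_passed: bool                    # FIT results for the service
    escalation_policy_configured: bool  # an escalation policy exists
    dependencies_healthy: bool          # dependency health summary


@dataclass(frozen=True)
class Decision:
    approved: bool
    reasons: tuple[str, ...] = ()


def evaluate(snapshot: ReadinessSnapshot, min_availability: float = 0.999) -> Decision:
    """Same inputs always yield the same decision: no clocks, no randomness."""
    reasons = []
    if snapshot.slo_availability < min_availability:
        reasons.append(
            f"availability {snapshot.slo_availability:.4f} "
            f"is below {min_availability:.4f}"
        )
    if not snapshot.fit_passed:
        reasons.append("FIT results are failing")
    if not snapshot.escalation_policy_configured:
        reasons.append("no escalation policy configured")
    if not snapshot.dependencies_healthy:
        reasons.append("one or more dependencies are unhealthy")
    # Approve only when no check produced a reason to hold the release.
    return Decision(approved=not reasons, reasons=tuple(reasons))
```

Returning the reasons alongside the verdict means a withheld approval can be routed to an escalation path with the failing checks already listed.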

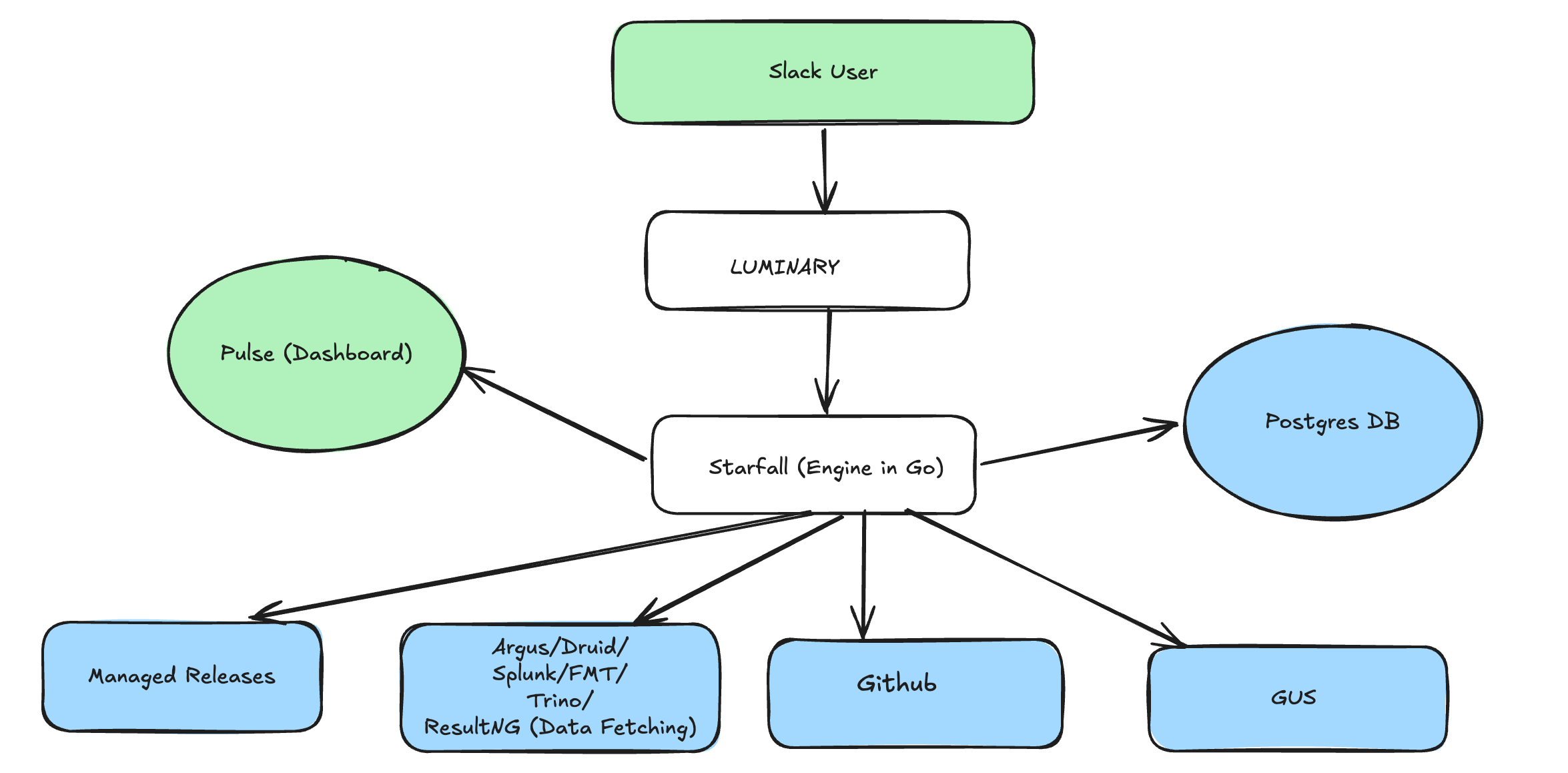

Luminary architecture.

What cross-service dependency visibility constraints required automated production readiness validation?

In the AI Cloud ecosystem, a service could look healthy while its upstream and downstream neighbors crumble. Previously, release managers wasted hours cross-referencing dashboards to find the truth about system readiness.

Luminary now embeds automated dependency validation into the promotion workflow. The system scrutinizes live availability signals and FIT results across the entire dependency graph before any release moves forward. Promotion occurs only when every linked service meets specific readiness thresholds.

This shift treats production readiness as a collective property of the environment. Deterministic automation replaces the tedious manual aggregation of data. This approach mitigates operational risk and maintains deployment speed as system complexity increases.
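One way to picture readiness as a property of the whole graph is a traversal that visits every service linked to the release candidate and collects any that fail their check. The sketch below is a minimal version under stated assumptions: `dependencies` is a hypothetical adjacency map that already includes both upstream and downstream links, `is_ready` is a precomputed per-service verdict, and the service names are invented.

```python
from collections import deque


def unready_services(root, dependencies, is_ready):
    """Visit every service reachable from root; return those failing readiness."""
    seen = {root}
    queue = deque([root])
    failing = []
    while queue:
        service = queue.popleft()
        # A service with no recorded verdict is treated as not ready.
        if not is_ready.get(service, False):
            failing.append(service)
        for neighbor in dependencies.get(service, ()):
            if neighbor not in seen:  # guards against cycles in the graph
                seen.add(neighbor)
                queue.append(neighbor)
    return sorted(failing)


def can_promote(root, dependencies, is_ready):
    """Promotion is allowed only when no linked service is failing."""
    return not unready_services(root, dependencies, is_ready)
```

For example, with `gateway` depending on `model-server` and `auth`, and `model-server` depending on an unready `feature-store`, `can_promote("gateway", ...)` returns `False` even though `gateway` itself reports healthy.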

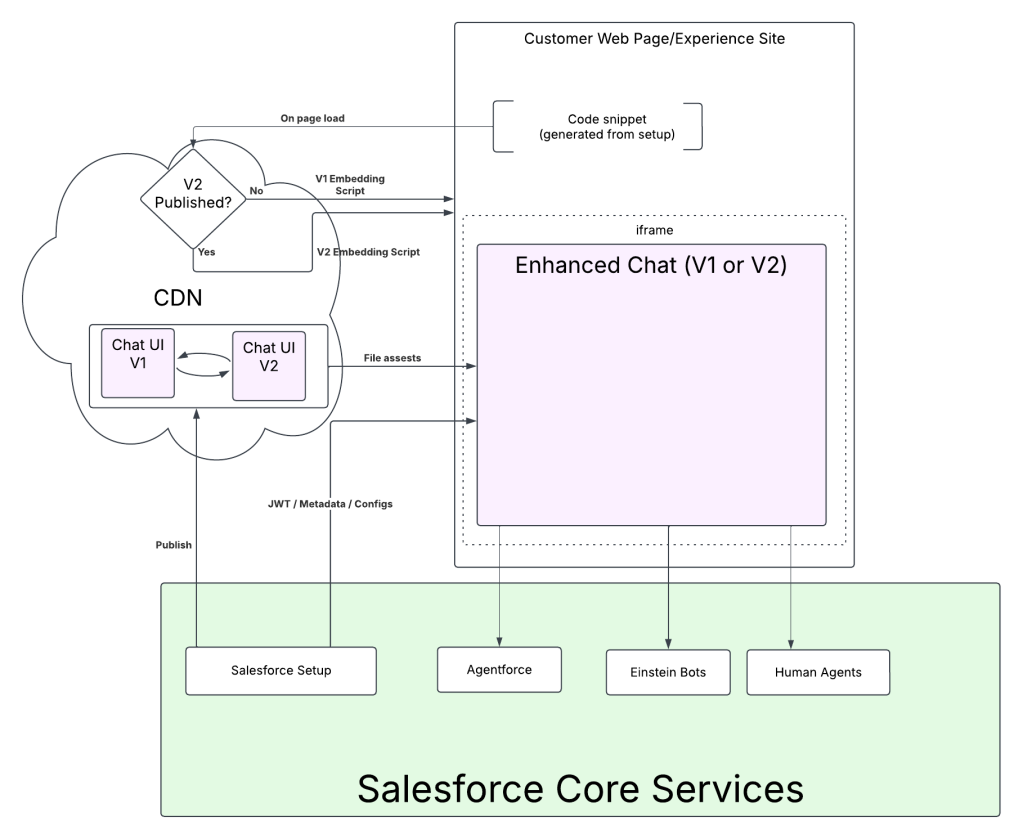

What deployment state detection constraints required event-driven promotion workflows?

Manual polling used to stall the handoff from stage to production. Engineers typically checked Managed Releases after a stage deployment finished and then returned to Slack to resume the approval process. This stop-and-go method forced constant context switching and created unnecessary delays.

Luminary now integrates with FUN execution events to track deployment transitions as they happen. The system detects when a stage execution completes and automatically triggers the next approval phase. This same event-driven logic manages the transition from production to GIA.

Release promotion now flows without interruption because Luminary drives the workflow using telemetry instead of human observation. Automation maintains this momentum and keeps full control across every environment boundary.
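The event-driven handoff can be sketched as a small state machine in which a deployment event, rather than a person checking a dashboard, moves the release to its next phase. The phase names and event type strings below are hypothetical; the real event payloads are not described here.

```python
from enum import Enum


class Phase(Enum):
    STAGE_DEPLOYING = "stage_deploying"
    AWAITING_PROD_APPROVAL = "awaiting_prod_approval"
    PROD_DEPLOYING = "prod_deploying"
    AWAITING_GIA_HANDOFF = "awaiting_gia_handoff"


# (current phase, event type) -> next phase. Event names are assumptions.
TRANSITIONS = {
    (Phase.STAGE_DEPLOYING, "stage_execution_completed"): Phase.AWAITING_PROD_APPROVAL,
    (Phase.AWAITING_PROD_APPROVAL, "prod_approval_granted"): Phase.PROD_DEPLOYING,
    (Phase.PROD_DEPLOYING, "prod_execution_completed"): Phase.AWAITING_GIA_HANDOFF,
}


def on_event(phase: Phase, event_type: str) -> Phase:
    """Advance when the event matches the current phase; otherwise stay put."""
    return TRANSITIONS.get((phase, event_type), phase)
```

Ignoring events that do not match the current phase keeps duplicate or out-of-order deliveries from moving a release backward or skipping an approval.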

What release traceability gaps drove automated Git tagging and GovCloud handoff workflows?

Manual Git release tagging once created a significant tracking gap. Engineers need to know which commits reached production and which execution is associated with a particular incident. Manual tags sometimes lacked the required metadata or links to specific executions.

Luminary now automates artifact generation to bridge this gap. The system aggregates commits since the last release, builds structured release notes, and attaches execution references to Git artifacts. Programmatic creation of major, minor, and patch releases ensures the system enforces traceability.
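To make the artifact generation concrete, here is a minimal sketch of computing the next major, minor, or patch tag and assembling release notes that carry an execution reference. The `Commit` type, the `vMAJOR.MINOR.PATCH` tag format, the notes layout, and the `execution_ref` value are assumptions for illustration; fetching commits from Git and creating the tag are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    sha: str
    subject: str


def bump(version: str, part: str) -> str:
    """Return the next tag for a major, minor, or patch release."""
    major, minor, patch = (int(x) for x in version.lstrip("v").split("."))
    if part == "major":
        return f"v{major + 1}.0.0"
    if part == "minor":
        return f"v{major}.{minor + 1}.0"
    if part == "patch":
        return f"v{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown release part: {part!r}")


def release_notes(tag: str, commits: list[Commit], execution_ref: str) -> str:
    """Build structured notes listing each commit since the last release."""
    lines = [f"Release {tag}", f"Execution: {execution_ref}", "", "Changes:"]
    lines += [f"- {c.sha[:7]} {c.subject}" for c in commits]
    return "\n".join(lines)
```

Under these assumptions, `bump("v1.4.2", "minor")` yields `v1.5.0`, and the notes for that tag open with the execution reference so an incident can be traced back to the deployment that shipped it.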

The release automation also extends to promotion of changes to GovCloud. Luminary verifies approved Change Cases and generates populated GUS work items for the GIA channel. These workflows now govern high-stakes handoffs, which eliminates artifact drift and speeds up secure promotions.

Gloria shares what keeps her at Salesforce.

What multi-service configuration diversity required centralized automation state management?

AI Cloud spans over 100 services with different dependencies, SLO definitions, and testing standards. Managing these varied configurations requires a unified source of truth to maintain accuracy.

Luminary utilizes a centralized Postgres state engine to track service metadata, dependency maps, and escalation policies. The system pulls authoritative data from Monitoring Cloud and updates workflow states programmatically. This removes the need for manual configuration during the approval process.

Luminary manages both permanent service attributes and shifting telemetry within its structured state model. Workflows adapt as services evolve while engineers maintain a consistent Slack interface. Centralized state management allows Luminary to handle diverse architectures while ensuring every deployment remains correct.
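A structured state model that separates stable service attributes from changing telemetry might look like the two-table sketch below. It uses Python’s built-in `sqlite3` with an in-memory database purely as a stand-in for Postgres, and the table names, columns, and sample values are hypothetical rather than Luminary’s actual schema.

```python
import sqlite3

# One table for attributes that rarely change, one for the latest telemetry.
SCHEMA = """
CREATE TABLE service (
    name TEXT PRIMARY KEY,
    escalation_policy TEXT NOT NULL
);
CREATE TABLE telemetry (
    service_name TEXT PRIMARY KEY,
    availability REAL NOT NULL,
    observed_at TEXT NOT NULL
);
"""


def open_state() -> sqlite3.Connection:
    """Create an in-memory database with the illustrative schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    return conn


def record_telemetry(conn, service_name, availability, observed_at):
    """Keep only the most recent reading per service."""
    conn.execute(
        "INSERT OR REPLACE INTO telemetry (service_name, availability, observed_at) "
        "VALUES (?, ?, ?)",
        (service_name, availability, observed_at),
    )


def current_state(conn, service_name):
    """Join stable attributes with the latest telemetry, if any exists."""
    return conn.execute(
        "SELECT s.name, s.escalation_policy, t.availability "
        "FROM service s LEFT JOIN telemetry t ON t.service_name = s.name "
        "WHERE s.name = ?",
        (service_name,),
    ).fetchone()
```

Keeping the two kinds of data apart lets telemetry be overwritten frequently without touching the attributes that define the service.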

What concurrency and stability constraints shaped Luminary’s background automation architecture?

Early versions of Luminary used a Flask API server to handle both Slack logic and background processing. As automation grew to include telemetry synchronization and scheduled jobs, resource contention threatened system stability.

The team re-architected the system by introducing Starfall. This Golang-based engine handles database synchronization and cron-driven tasks. This change decouples heavy background operations from real-time Slack workflows. Postgres acts as the centralized decision-state backbone while Starfall maintains data freshness asynchronously. This separation keeps the system responsive during high concurrent loads.
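The decoupling described above can be illustrated with a background worker that refreshes shared state on an interval while the request path only reads what is already stored. This is a Python sketch of the pattern, not Starfall’s Go implementation: `StateStore` is an in-memory stand-in for the Postgres decision state, and `fetch`, the interval, and the threshold are assumptions.

```python
import threading


class StateStore:
    """In-memory stand-in for the centralized decision state."""

    def __init__(self):
        self._lock = threading.Lock()
        self._availability = {}

    def update(self, values):
        with self._lock:
            self._availability.update(values)

    def get(self, service):
        with self._lock:
            return self._availability.get(service)


def sync_loop(store, fetch, interval_s, stop):
    """Background worker: refresh the store until asked to stop."""
    while not stop.is_set():
        store.update(fetch())
        stop.wait(interval_s)  # sleeps, but wakes early if stop is set


def handle_request(store, service, threshold=0.999):
    """Request path: reads stored state only, never calls the telemetry source."""
    value = store.get(service)
    return value is not None and value >= threshold
```

A caller would start the worker with `threading.Thread(target=sync_loop, args=(store, fetch, 60.0, stop), daemon=True).start()`, so a slow telemetry fetch delays only the next refresh and never a request being served.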

Luminary has enabled nearly 600 release workflows across AI Cloud. The system acts as a gatekeeper by escalating releases that fail validation or readiness checks for manual review. Healthy releases that once required lengthy coordination now complete in seconds. This architecture scales reliability and velocity while maintaining operational safety.
