In our Engineering Energizers Q&A series, we highlight the engineers building the systems that power Salesforce’s most advanced platforms. Today, we spotlight Utkarsh Jain, a senior software engineer at Salesforce who develops the Connections capability within Agentforce. Connections transforms how AI agents deliver information by moving beyond plain text to support richer, interactive experiences, which already power more than 4 million sessions.

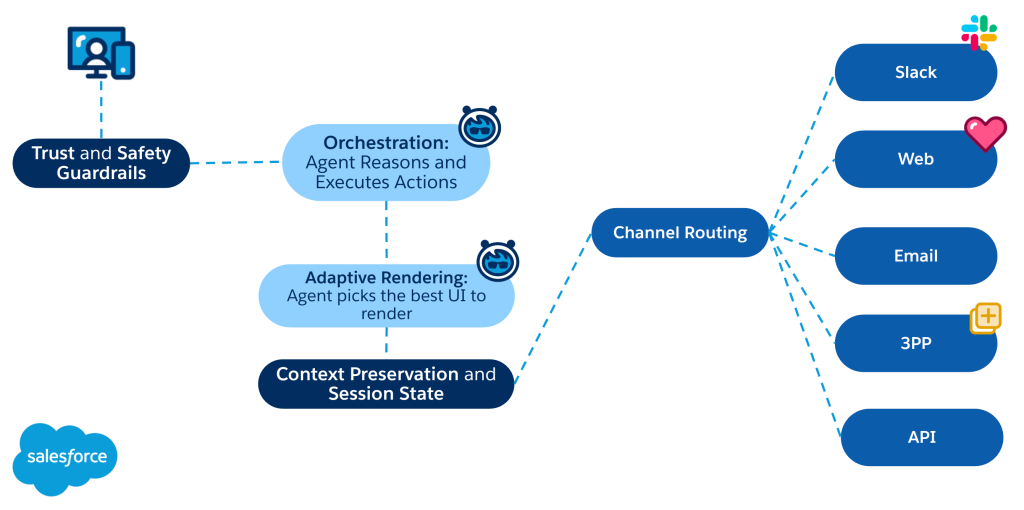

Explore how Utkarsh and his team engineered a system that allows Agentforce agents to render structured UI components directly from LLM responses. Along the way, they had to decide when rich UI serves users better than plain text, build a conversion system for structured interfaces, and integrate these capabilities into agent infrastructure while preserving conversational context.

What is your team’s mission in building the Connections feature for Agentforce?

Our team improves how AI agents share information by enabling responses that move beyond plain text to support interactive experiences. As conversational AI systems grew, many interactions still relied on large blocks of text to guide users. This often made complex workflows difficult for people to navigate.

Connections solves this by allowing Agentforce agents to render structured interface components directly within a conversation. Instead of describing choices through text, the system presents interactive elements that help users finish tasks efficiently. For instance, an airline booking might require seat selection. Rather than asking a user to type a seat number, the agent shows a visual seat map for direct selection.

To make this possible, the team builds the infrastructure that generates these interfaces dynamically from AI responses. This ensures the components remain compatible with the conversational systems that power Agentforce.

What technical challenge did the team face enabling AI agents in Agentforce to dynamically render rich UI components instead of returning plain text responses?

One of the first challenges involved determining when a response should convert into a structured interface. During early development, the system frequently over-formatted responses, which meant even simple answers like “yes” or “no” appeared as UI components.

This approach created usability issues because users often need the flexibility to respond beyond predefined options. Restricting interactions to fixed selections can limit the conversation, while large sets of options can make an interface difficult to navigate.

Through experimentation, the team established clearer boundaries around when structured UI actually improves the experience. Limiting the number of options within structured components helped stabilize the interaction. Ultimately, the challenge shifted from simply converting text to UI to determining when a structured presentation provides a genuine benefit.
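As a rough illustration of such a boundary, the sketch below keeps trivial answers as text and only allows a structured component when the number of options falls within a small range. The function name, the thresholds, and the list of trivial answers are all hypothetical; the team describes reaching these boundaries through experimentation, not through this specific rule.

```python
# Hypothetical thresholds; the article does not state the actual limits.
MIN_OPTIONS = 2
MAX_OPTIONS = 6
TRIVIAL_ANSWERS = {"yes", "no", "ok", "okay"}


def choose_render_mode(text: str, options: list[str]) -> str:
    """Return "structured" only when a component is likely to help the user."""
    normalized = text.strip().lower().rstrip(".!")
    # Simple answers such as "yes" or "no" should never become UI components.
    if normalized in TRIVIAL_ANSWERS:
        return "text"
    # Too few options adds nothing; too many makes the component hard to navigate.
    if not (MIN_OPTIONS <= len(options) <= MAX_OPTIONS):
        return "text"
    return "structured"
```

Under these assumptions, `choose_render_mode("Yes.", [])` stays as text, while a seat prompt with three options is rendered as a structured component.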

What engineering challenges shaped the system that converts raw LLM outputs into structured UI components for AI agents in Agentforce?

Transforming LLM outputs into structured UI components proved more complex than the team initially expected. While early assumptions suggested consistent behavior across use cases, results varied depending on the specific interaction and required interface.

The system requires a mechanism to interpret model responses and convert them into structured message formats. This allows the client interface to render UI components by translating conversational outputs into structured representations while preserving the original intent.
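One way to picture this conversion step is a small parser that accepts model output, tries to read it as a structured payload, and falls back to plain text whenever the payload is missing or malformed. The `Message` type and the JSON field names (`type`, `text`, `options`) are assumptions made for this sketch, not the Agentforce message format.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Message:
    kind: str  # "text" or "choices" (hypothetical message kinds)
    text: str
    options: list[str] = field(default_factory=list)


def to_message(raw: str) -> Message:
    """Interpret raw model output; fall back to plain text on any doubt."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return Message(kind="text", text=raw)
    if not isinstance(data, dict):
        return Message(kind="text", text=raw)

    prompt = data.get("text")
    options = data.get("options")
    valid = (
        data.get("type") == "choices"
        and isinstance(prompt, str)
        and isinstance(options, list)
        and all(isinstance(o, str) for o in options)
    )
    if valid:
        return Message(kind="choices", text=prompt, options=options)
    # Keep the original intent as text when the structure cannot be trusted.
    return Message(kind="text", text=prompt if isinstance(prompt, str) else raw)
```

Falling back to text on any parsing failure means a malformed model response degrades to an ordinary reply instead of a broken component.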

Ensuring the system supports multiple industries and interaction models presented another hurdle. A formatting approach for airline bookings might fail for other services. Therefore, the system must support various structured formats as a general platform capability rather than a single-scenario solution. Today, this capability supports more than 133,223 agents generating surface-enabled experiences across Agentforce deployments.

How Agentforce preserves context and renders rich UI for the best customer experience.

What architectural constraints shaped the runtime layer that determines how an AI-generated response in Agentforce should be rendered?

The runtime layer determines whether an agent response remains plain text or renders as a structured interface. This decision directly impacts how easily people use the interaction.

Converting too many responses into structured components makes the interface restrictive or complex. Conversely, keeping everything as text removes the benefits of interactive experiences that simplify choices. To solve this, the team refined prompting strategies through repeated experimentation, balancing what to convert to rich UI against what to keep as text. Testing different prompts allowed the team to achieve consistent rendering results.

Because Agentforce supports many scenarios, the system functions across various domains. The orchestration layer focuses on evaluating the nature of the response to decide when a structured interface provides the most value.

What safety and reliability concerns had to be addressed when using an LLM to transform AI-generated responses into structured UI content?

Producing structured responses with an LLM introduces specific reliability concerns. Formatting decisions must not change the meaning of the conversation or restrict user choices. This matters especially in enterprise deployments.

Converting a response into a fixed set of options can limit what a person says next. If the selections fail to capture intent, the interaction feels constrained. Usability also suffers if the model generates too many options, which can clutter the interface or cause visual errors.

The team established guardrails for structured responses to address these issues. These safeguards ensure rich formatting improves the interaction without creating friction or limiting the conversation.
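The article does not describe the guardrails in detail, so the following is only a sketch of what such checks could look like. It drops options that the original response never mentioned (so formatting cannot change meaning), removes duplicates, rejects oversized option sets, and always leaves free-text input enabled. `apply_guardrails`, `Choices`, and `MAX_OPTIONS` are hypothetical names and values.

```python
from dataclasses import dataclass
from typing import Optional

MAX_OPTIONS = 6  # hypothetical cap; the real limit is not stated in the article


@dataclass
class Choices:
    prompt: str
    options: list[str]
    allow_free_text: bool = True  # users can always answer outside the options


def apply_guardrails(original_text: str, options: list[str]) -> Optional[Choices]:
    """Return a safe component, or None to signal "keep this as plain text"."""
    haystack = original_text.lower()
    seen: set[str] = set()
    cleaned: list[str] = []
    for option in options:
        label = option.strip()
        key = label.lower()
        # Drop blanks, duplicates, and options the response never mentioned.
        if not label or key in seen or key not in haystack:
            continue
        seen.add(key)
        cleaned.append(label)
    if len(cleaned) < 2 or len(cleaned) > MAX_OPTIONS:
        return None
    return Choices(prompt=original_text, options=cleaned)
```

Returning `None` instead of raising keeps the failure path simple: the caller sends the original text unchanged.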

What difficulties emerged when preserving conversational context after users interact with AI-generated UI elements such as carousels or selectable components?

Structured UI interactions introduce a challenge for maintaining conversational continuity. When a person selects an option or interacts with a UI element, the system must translate that action back into language the agent understands.

Each interaction becomes a new input for the agent to interpret. Poor handling of this transition causes the agent to lose context or generate misaligned responses.

The system maps interactions with structured components back to the conversational state. This process allows the dialogue to continue naturally while preserving important context.
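A minimal sketch of that mapping, under the assumption that each rendered component is tracked by an identifier: when a selection event arrives, the handler looks up the component, rewrites the event as a natural-language turn, and appends it to the conversation history. `ConversationState`, `record_component`, and `handle_selection` are illustrative names, not part of any Agentforce API.

```python
from dataclasses import dataclass, field


@dataclass
class ConversationState:
    turns: list[dict] = field(default_factory=list)
    # component_id -> the prompt and options that were shown to the user
    pending: dict[str, dict] = field(default_factory=dict)


def record_component(state: ConversationState, component_id: str,
                     prompt: str, options: list[str]) -> None:
    """Remember what was rendered so a later selection can be interpreted."""
    state.pending[component_id] = {"prompt": prompt, "options": options}
    state.turns.append({"role": "agent", "text": prompt})


def handle_selection(state: ConversationState, component_id: str, index: int) -> str:
    """Translate a UI selection into a user turn the agent can read."""
    component = state.pending.pop(component_id)
    choice = component["options"][index]
    utterance = f'In response to "{component["prompt"]}", the user selected "{choice}".'
    state.turns.append({"role": "user", "text": utterance})
    return utterance
```

Including the original prompt in the generated utterance is one way to keep the selection meaningful even if several components were shown earlier in the conversation.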

What integration challenges arose when introducing AI-driven response rendering into existing Service Bot infrastructure?

A key challenge was integrating directly with existing agent frameworks rather than operating as an isolated system. Agentforce already handles conversational workflows, so the system incorporates structured response rendering into that framework to keep interactions consistent.

The platform also accommodates diverse user experiences. Different industries require different structured layouts, such as seat selection for flights or ride options for booking a trip. Consequently, development centered on uniform rendering for structured data while permitting individual services to customize their interaction styles. Currently, over 1,000 organizations use this feature. This deployment shows how surface-enabled interactions extend the agent platform while remaining compatible with established conversational systems.
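The balance between uniform rendering and per-service customization can be sketched as a renderer registry: every structured payload passes through one entry point, services register their own component types, and unknown types fall back to a generic layout. The registry, the `seat_map` kind, and the payload fields below are assumptions for illustration only.

```python
from typing import Callable

Renderer = Callable[[dict], dict]
_RENDERERS: dict[str, Renderer] = {}


def register(kind: str) -> Callable[[Renderer], Renderer]:
    """Decorator that lets a service plug in its own component type."""
    def decorator(fn: Renderer) -> Renderer:
        _RENDERERS[kind] = fn
        return fn
    return decorator


def render_generic_list(payload: dict) -> dict:
    """Default layout used when no service-specific renderer exists."""
    return {"component": "list", "items": payload.get("items", [])}


def render(kind: str, payload: dict) -> dict:
    """Uniform entry point; unknown kinds degrade to a generic list."""
    return _RENDERERS.get(kind, render_generic_list)(payload)


@register("seat_map")
def render_seat_map(payload: dict) -> dict:
    # Hypothetical airline-specific layout.
    return {
        "component": "seat_map",
        "rows": payload["rows"],
        "available": payload["available"],
    }
```

For example, `render("seat_map", {"rows": 30, "available": ["12A"]})` uses the airline-specific renderer, while an unregistered kind such as `"ride_options"` is shown as a generic list.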

Learn more

- Stay connected — join our Talent Community!

- Check out our Technology and Product teams to learn how you can get involved.