In our Engineering Energizers Q&A series, we highlight the engineering minds driving innovation across Salesforce. Today, we feature Rajashree Pimpalkhare, SVP of Software Engineering, Field Service, and the team responsible for voice-to-form data capture in the Field Service Mobile application, which delivers AI-powered mobile experiences to a field workforce supporting hundreds of thousands of active technicians each month.

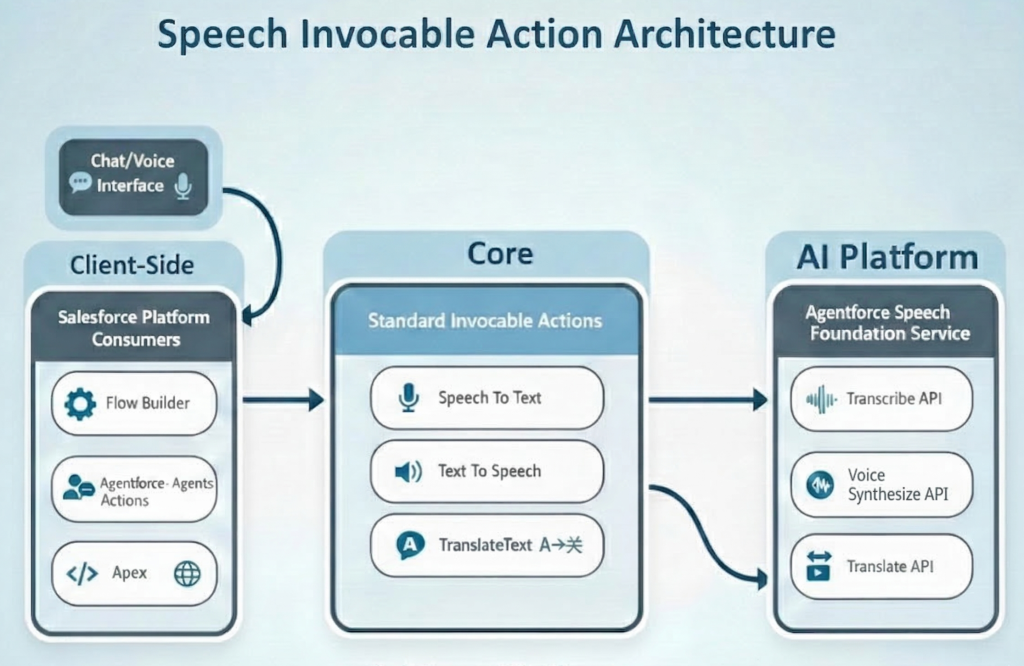

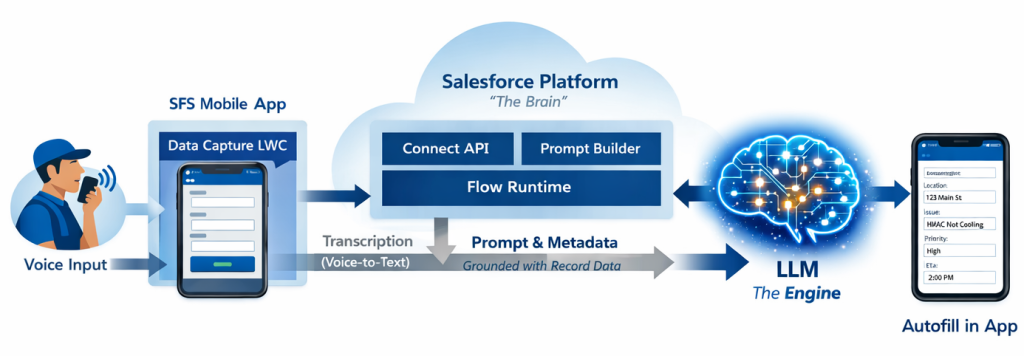

Discover how her team developed a hybrid on-device and cloud architecture to accurately translate unstructured voice input into structured form data at an enterprise scale, ensured reliable performance across various accents and noisy field conditions through real-world voice testing, and managed latency, cost, and privacy by keeping speech-to-text on the device while leveraging cloud LLMs for intelligent field mapping.

AI-driven data flow process diagram.

What is your team’s mission as it relates to building voice-to-form data capture for the Field Service Mobile application?

Our mission focuses on streamlining field work. We empower technicians to capture data quickly, safely, and accurately using natural voice interactions. Field technicians often work in environments where traditional data entry is difficult, such as when wearing gloves, handling equipment, or in dangerous locations. This makes voice a more effective way to input information.

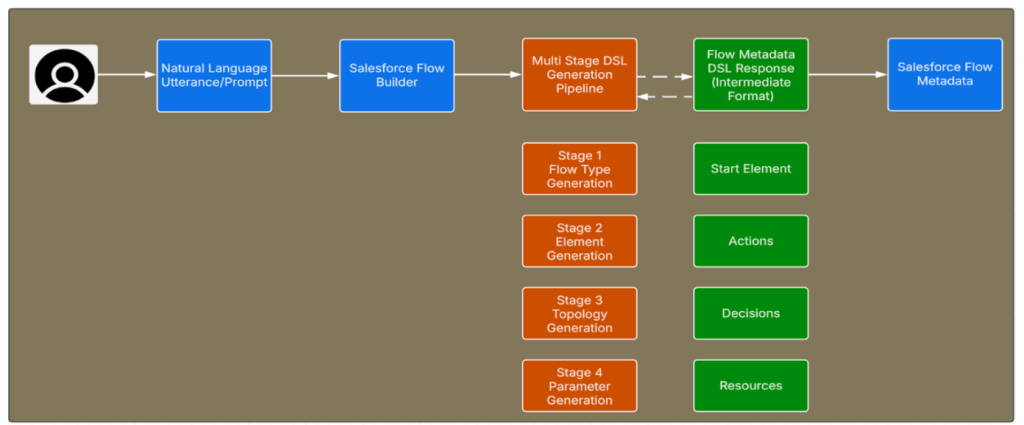

From an engineering standpoint, our mission goes beyond simple speech recognition; it involves intelligent data capture. Technicians provide a natural summary of their work, and the system directly maps that input to structured form fields. Form structures, field semantics, and technician language differ significantly across customers and industries. Therefore, this mapping requires semantic understanding, not just deterministic parsing. Without AI-based semantic reasoning, this method would depend on rigid, form-specific rules, which would not scale across various industries or schemas.

Voice-to-form is a core feature within Field Service Mobile. It integrates directly into existing record editing and form workflows. This approach allows for gradual adoption without introducing new interaction models or requiring user retraining. The outcome is a production-grade experience that enhances efficiency while meeting enterprise demands for accuracy, reliability, and trust.

What accuracy constraints did you encounter when mapping unstructured voice input into structured form fields at enterprise scale?

The central accuracy challenge involved converting free-form speech into correctly populated, structured fields. This task spanned diverse industries, form designs, and technician speaking styles. Technicians commonly use domain-specific terminology, abbreviations, and relative date references. The system must interpret these accurately within each field’s data type and format.

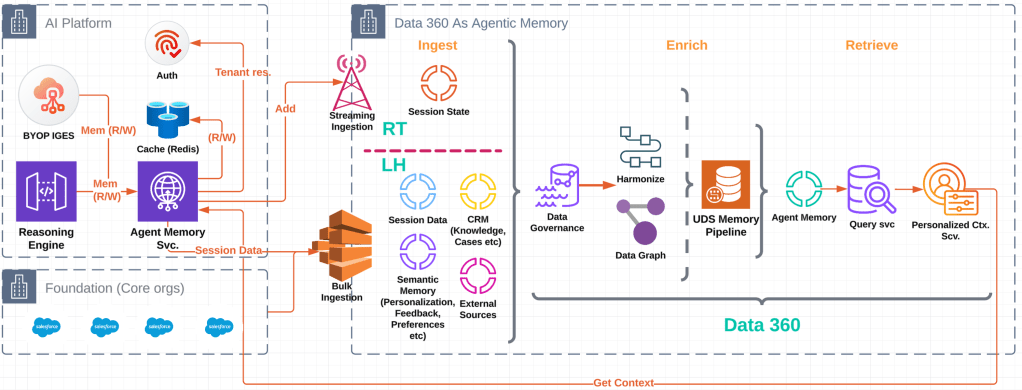

As the number of form schemas increases, deterministic approaches would demand per-form logic to manage overlapping field names, varying data types, and context-dependent references. This quickly leads to a combinatorial maintenance issue. To resolve this, the team developed a hybrid architecture. This combines on-device speech-to-text with cloud-based large language models for semantic field mapping. Each request incorporates schema-driven metadata — field types, constraints, examples, and formatting expectations — encoded directly into the prompt alongside the user’s utterance. This avoids relying on post-processing heuristics.

AI proved to be the only practical method to generalize intent resolution across hundreds of form variations without hardcoding logic. The team validated accuracy through iterative testing. This involved various device classes, form sizes, and real-world noise conditions, utilizing a growing collection of authentic technician utterances. Evaluation focused on correct field assignment and valid value population, achieving 85% field-level accuracy, which serves as a robust production baseline.

What reliability constraints emerged when supporting diverse voices, accents, and noisy field environments across real technician workflows?

Reliability challenges arose from the varied conditions in real-world field environments. These included differences in accents, speech cadence, vocabulary, and background noise from traffic or machinery. Such conditions can create inconsistency if not specifically addressed in both architecture and testing.

The team established reliability engineering in real-world conditions by creating a Voice Utterance Library. This library contained authentic technician voice clips captured during field ride-alongs. They systematically combined these utterances with various noise profiles and replayed them through the entire pipeline. Failures were categorized based on whether errors originated in transcription, semantic interpretation, or field assignment. This allowed for targeted refinement and made AI behavior observable rather than opaque.

On-device transcription, utilizing native iOS and Android speech frameworks, provided consistent performance in mobile environments. When transcription quality fluctuates, technicians can review and edit the text before processing. This prevents low-confidence inputs from spreading into structured records. This layered strategy ensures reliable performance across diverse field conditions.

What latency constraints shaped how you balanced on-device speech-to-text with server-side text-to-form processing for voice workflows?

Latency directly affects usability in the field. Technicians expect quick feedback, even when network conditions vary. The team needed to minimize perceived delay while still using cloud intelligence for semantic understanding.

The architecture separates transcription from semantic processing. Speech-to-text operates entirely on the device. This removes network dependency and provides predictable performance. Only the resulting text and metadata transmit to the server for field mapping. This reduces payload size and avoids audio transmission. This separation ensures AI inference applies only where semantic reasoning is necessary.

The system completes a single server round-trip for text-to-form processing. This avoids compounding delays. A review step allows technicians to edit transcriptions before submission. This adds a quality gate without stopping progress. Together, these choices enable end-to-end completion in under 15 seconds. This preserves responsiveness in real-world conditions.

What user-experience constraints guided the design of a voice workflow for non-technical field service technicians?

The main UX constraint was simplicity. Field technicians complete jobs under time pressure. They do not experiment with AI. The voice workflow needed to be discoverable, intuitive, and require minimal explanation. It also needed to avoid introducing chat-style interfaces.

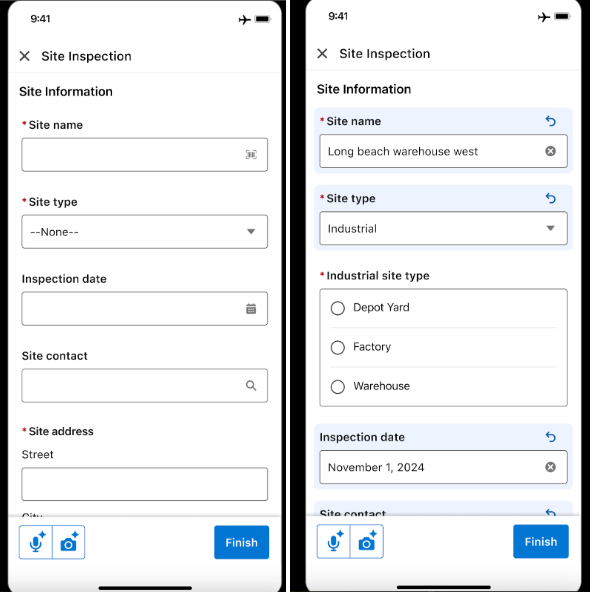

Voice input embeds directly into existing form experiences. Technicians start voice capture with a single control. They speak naturally without referencing field names. After processing, updated fields visually highlight. Inline undo and text editing controls keep users in full control. This transparency is critical when AI modifies structured records.

Privacy considerations also influenced UX decisions. No voice recordings store. Audio discards immediately after transcription. Extensive beta testing with enterprise customers confirmed that technicians preferred transparency and correction over silent automation. This resulted in a voice experience that feels native to the workflow.

Voice-powered data entry, natively integrated into existing workflows.

What cost-to-serve and privacy constraints influenced the decision to perform speech-to-text on the device?

Cost and privacy were core architectural limits. Cloud-based transcription would create recurring costs. It would also increase exposure of sensitive audio data.

By performing speech-to-text on the device, using native OS frameworks, the team removed transcription costs entirely. They ensured audio never leaves the device. Once transcription finishes, the audio immediately discards. Only text processes further. This simplifies compliance by avoiding storage, retention, and audit requirements for raw audio.

Text-to-form processing uses existing cloud LLM infrastructure. This minimizes incremental platform cost. It also retains flexibility. Processed data retains only as needed to populate the form. This ensures AI applies where it adds semantic value. The rest of the pipeline remains deterministic, cost-efficient, and privacy-safe.

Learn more

- Stay connected — join our Talent Community!

- Check out our Technology and Product teams to learn how you can get involved.