Hello, the internet! Today on The Architecture Files, I’m going to delve into a subject that doesn’t get much love: software configuration, and its slippery nature.

If you’re not familiar with “configuration”, the term usually refers to the parameters and initial settings for a piece of software. Just about every project has some of it. For small systems, it’s no big deal — just a few lines in a text file! But as your software grows, and spans many environments and situations, configs can quickly get out of control … and even cause problems. (If you’ve never experienced that, either you’ve never worked on a large system, or you’ve been exceptionally lucky.)

The basic ideas of configuration are rarely explained well. And as Wikipedia says, “There are no definitive standards or strong conventions”, so the whole subject can be very confusing. In this post, I’ll outline the core behaviors of configuration. (None of this is particularly specific to Salesforce, but at the end I’ll briefly cover how we make use of some of these ideas.)

Configuration: A Spectrum

I used to think software was composed of two halves: Code (the instructions you’ve written for a program); and Data (the values supplied at runtime by users, to be displayed, saved, or whatever).

It turns out, life isn’t that simple. Instead of a clear-cut division, it’s really a spectrum between things that are more static (i.e. harder to change) and more dynamic (i.e. easier to change):

On the left, code is usually changed slowly, by humans, in a controlled path with verifications for correctness. On the right, data is changed every second, by users, to record things in the world, for fun or profit.

And in the middle? You get configuration: material that can alter or constrain the behavior of the code. Configuration can look more like code or like data, depending on how you treat it.

Configuration values are stored separately from the main body of a program, which allows them to change independently. Why is that important? Consider this example:

int threadCount = 10;

if ("ABC".equals(myDataCenter)) threadCount = 30;

createThreadPool(threadCount, PopulationStrategy.Eager);

See any problems with this? It’s a little confusing, for one thing; the logic about where each value comes from is hard to follow. It’s not obvious which parts are safe to change, and which are not. That limits who can change them; updates to these values have all the risk and complexity of changing the code around them.

Moving values to be managed as configuration allows the value to be chosen independently of the code:

Configuration File:

threadCount:
  Default: 10
  DC=ABC: 30
populationStrategy: Eager

Code:

createThreadPool(config.get("threadCount"), config.get("populationStrategy"));

You can see the benefit we’ve gained by separating out the “knobs” from the machinery of the code: both are easier to read and update.

Configuration is (generally) less expressive than code, and that makes it simpler to reason about and test the code independently. It allows the behavior of a system to be changed within well-established “guardrails”; you can write and test the code, and verify that it will work with whatever values are supplied (or fail in a fast and obvious way that says “Hey, your configurations are messed up!”).
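To make the “guardrails” idea concrete, here is a minimal Java sketch of how the threadCount lookup from the example above might be resolved and validated. The class name, the method name, and the 1 to 256 limits are all assumptions made for illustration; they are not part of any real library or of the original example.

```java
import java.util.Map;

// Hypothetical sketch: ThreadPoolConfig and resolveThreadCount are
// illustrative names, and the limits below are assumed values.
final class ThreadPoolConfig {
    // Guardrails the code is assumed to have been tested against.
    static final int MIN_THREADS = 1;
    static final int MAX_THREADS = 256;

    // Values as they might look after parsing the configuration file above.
    static final Map<String, String> THREAD_COUNT = Map.of(
            "Default", "10",
            "DC=ABC", "30");

    /** Picks the data-center override if one exists, otherwise the default. */
    static int resolveThreadCount(Map<String, String> values, String dataCenter) {
        String raw = values.getOrDefault("DC=" + dataCenter, values.get("Default"));
        if (raw == null) {
            throw new IllegalStateException("threadCount has no Default value");
        }
        int count;
        try {
            count = Integer.parseInt(raw.trim());
        } catch (NumberFormatException e) {
            throw new IllegalStateException("threadCount is not an integer: " + raw, e);
        }
        // Fail fast and loudly if the value is outside the tested range.
        if (count < MIN_THREADS || count > MAX_THREADS) {
            throw new IllegalStateException(
                    "threadCount " + count + " is outside " + MIN_THREADS + ".." + MAX_THREADS);
        }
        return count;
    }

    public static void main(String[] args) {
        System.out.println(resolveThreadCount(THREAD_COUNT, "ABC")); // 30 (override applies)
        System.out.println(resolveThreadCount(THREAD_COUNT, "XYZ")); // 10 (falls back to Default)
    }
}
```

The point of the sketch is that the code owns the validation, so whoever edits the value gets an immediate, readable error instead of a mysterious failure later.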

Configuration can also allow the code’s behavior to be changed by someone other than its author. This might be because one team builds the software and another team deploys and runs it; or it might be so business folk can decide how to, say, price a certain product without talking to engineers every time.

Configuration isn’t a cure-all. In fact, if used unwisely, it can add substantial complexity and risk to your system. Like any other part of software engineering, you kinda need to know what you’re doing.

The Five Axes

So, configuration is “material that constrains or alters the behavior of code”.

Simple, right?

Not quite! There are actually several decisions to make about how you store and use your configurations. If you lump them all together, things get pretty confusing. Let’s break them out and look at each in turn. There are 5 axes that you probably care about:

- Effect: Internal or External?

- Lifecycle: Static or Dynamic?

- Source Of Truth: Files Or Data?

- Scope: Where does this apply?

- Ownership: Who decides?

Effect: Internal or External?

Configuration determines how code should behave; think of it as a “fill in the blank” about some aspect of the code’s functionality at runtime.

There are two big classes of behavior:

- Internal: related to a code’s internal function, in isolation;

- External: related to a code’s place in the larger world, or its communication with others.

Internal values point “inside” the code; they include things like:

- default levels and limits, e.g. for the size of a thread or connection pool;

- options that affect performance, like flags for memory usage and GC;

- enablement or disablement of a code path, like a feature or new version;

- choice of alternate algorithms, such as for encryption (DES, AES, etc);

- rules for complex behavior, such as alerts (“only fire if this happens consistently for 5 minutes”);

- any other magic strings and numbers; etc.

Semantically, there’s nothing you couldn’t put into an internal configuration value; these are just some examples, but the sky’s the limit. They’re frequently scalar values (e.g. a single atomic integer, string, etc), but they can also include lists, maps, pointers to other values, etc.

External (aka “topological”) values, on the other hand, point “outside” the code. They could include:

- references to external service dependencies (e.g. a DB connection string);

- references to other machines that are in the same cluster or role;

- pointers to local machine resources, like file system paths for log files;

- secrets or other authentication material for secure communication; etc.

Both internal and external values are intended to change independently of the code; the difference is that the former (internal) are generally things that the owners of this application can control, whereas the latter (external) depend on “reality”, i.e. things out there in the rest of the world, or agreements with others.

Lifecycle: Static or Dynamic?

Configurations are meant to change independently of the code (that’s kind of the point, right?). But when does that change occur, exactly? There are two main choices; a configuration change can either be:

- Static: coupled with the lifecycle of the application, requiring a redeployment or restart in order for changes to take effect; or

- Dynamic: independent of the lifecycle of the application, able to change at any time.

Neither of these is right or wrong; the former is simpler, and the latter is (usually) faster.

Static configuration changes are simple because they work the same way code does, and they don’t require any extra diligence or thought. Got a new config value? Simply check it in, deploy it, and restart the application, just like you would for a code change. Simple.

Conversely, dynamic configuration changes are attractive because they’re fast. In cases where you want (or need) the change to be atomic, or controlled by some other factor, this is the only option. This is common behavior for feature flags; you want them to be nearly instantaneous across a large number of servers without having to bounce processes.

However, with that speed comes additional complexity. You have to ask:

- How fast is the change going to be recognized? Seconds, minutes, or hours? Is it globally instantaneous, or eventually consistent (e.g., if values are cached or pushed out by automation)?

- How isolated are reads? Things can get weird if values change halfway through an execution.

- Is your application capable of reacting to the change consistently? For example, if some Java code retrieved the value once and stuffed it into a static variable, different parts of your application may now see different values when the value changes. This is avoided with static configs that you redeploy.

Dynamic configuration changes are intriguing, but tricky.
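Two of the questions above (isolated reads, and values cached once and never refreshed) can be illustrated with a small sketch. This is one possible approach, not the only one: the whole set of values is swapped atomically, and each unit of work takes a snapshot. DynamicConfig is a made-up name for illustration.

```java
import java.util.Map;
import java.util.concurrent.atomic.AtomicReference;

// Hypothetical sketch: DynamicConfig is an illustrative name, not a real library class.
final class DynamicConfig {
    // The whole value set is replaced atomically, so readers never see a half-applied update.
    private final AtomicReference<Map<String, String>> current;

    DynamicConfig(Map<String, String> initial) {
        current = new AtomicReference<>(Map.copyOf(initial));
    }

    /** Called by whatever pushes changes (a poller, an admin tool, and so on). */
    void update(Map<String, String> newValues) {
        current.set(Map.copyOf(newValues));
    }

    /** Immutable view to hold for the duration of one unit of work. */
    Map<String, String> snapshot() {
        return current.get();
    }

    public static void main(String[] args) {
        DynamicConfig config = new DynamicConfig(Map.of("threadCount", "10"));

        // Anti-pattern: read once and keep forever (like stuffing it in a static field).
        String cachedOnce = config.snapshot().get("threadCount");

        // One request takes a snapshot and uses it for all of its reads.
        Map<String, String> request = config.snapshot();

        config.update(Map.of("threadCount", "30"));

        System.out.println(cachedOnce);                           // 10 (stale)
        System.out.println(request.get("threadCount"));           // 10 (consistent within the request)
        System.out.println(config.snapshot().get("threadCount")); // 30 (new work sees the change)
    }
}
```

The snapshot keeps a single execution consistent, while the stale cached value shows why “read once at startup” code quietly defeats a dynamic change.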

There’s a correlation between effect (internal vs external) and lifecycle (static vs dynamic): external information (about topology, peers, and other services) generally works much better when it’s dynamic. If you make it static, then every change in the external world has to be manually mirrored in the local copy and deployed. Instead, a common pattern for extracting dynamic, external configuration data is Service Discovery (you don’t store those values as configs, you just look them up at runtime).

When asking which way your configuration should work, consider the frequency with which the value normally changes. Is it “set and forget”? Make it static. Should there be daily updates, in response to traffic? Make it dynamic.

As we’ll see next, the lifecycle is also closely related to how you store the values.

Source Of Truth: Files Or Data?

Just like there are two approaches to the lifecycle of changes, there are also two main (“happy”) ways to store the source of truth for configuration data:

- as files under source control, in an SCM (like Git); or

- in a live data store, like a database.

The source of truth is directly related to a value’s lifecycle. SCMs generally lean towards static config changes; they let you manage your changes through branching and testing. Data stores, on the other hand, support dynamic changes, since they let you quickly update values without requiring a deployment. Changing a value through an SCM is comparatively harder (e.g., it might require review, approval, or a formal release process). Changing it in a data store is comparatively easier (e.g., a direct update by a user or automated system).

It’s up to you to decide how to treat each case. If you make it too hard, you’ll be crushed by process (say, having to run a lengthy deployment exercise to experiment with a setting in production). If you make it too easy, you might cause instability in your services (e.g. a single engineer could bring down the site by changing a DB connection string with no checks or balances).

There are also unhappy ways to store configurations. It’s certainly possible (and common, in some places) to store configurations directly on disk in an environment without source control. That’s bad! What if that disk goes south, or the machine gets repaved? Guarding against that kind of loss is the whole point of source control! Configuration values are also sometimes stored transiently in memory. Except for cases that are truly temporary (like bumping up the logging level on a machine, to debug a problem), this is also ill-advised, for the same reasons.

Here are a few common questions to ask about how you store your configurations:

- Can you undo or roll back quickly and reliably (or even automatically) if a mistake is pushed to prod?

- Do you keep change history? Who changed this value, when, and for what reason?

- Do you allow branching/merging? Can people work in parallel by creating branches in an SCM, pushing their branch to a sandbox for testing, and only then merging their final changeset to production?

The general pattern you want to lean towards is version control (with a few exceptions).
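If a value lives in a data store rather than an SCM, you can still get some of the same properties by never overwriting history. The following is a minimal in-memory sketch of that idea, assuming Java 16 or later (for records). VersionedValue and its methods are hypothetical names; a real system would persist the history somewhere durable.

```java
import java.time.Instant;
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: VersionedValue and its methods are illustrative names only.
final class VersionedValue {
    /** One entry in the audit trail: what changed, who did it, when, and why. */
    record Change(String value, String author, Instant at, String reason) {}

    private final List<Change> history = new ArrayList<>();

    VersionedValue(String initial, String author, String reason) {
        set(initial, author, reason);
    }

    /** Appends a new version; earlier versions are never overwritten. */
    synchronized void set(String value, String author, String reason) {
        history.add(new Change(value, author, Instant.now(), reason));
    }

    /** Returns the most recent value. */
    synchronized String get() {
        return history.get(history.size() - 1).value();
    }

    /** Rolls back by re-applying the previous value as a new, attributed change. */
    synchronized void rollback(String author) {
        if (history.size() < 2) {
            throw new IllegalStateException("nothing to roll back to");
        }
        String previous = history.get(history.size() - 2).value();
        set(previous, author, "rollback");
    }

    /** Returns an immutable copy of the full change history. */
    synchronized List<Change> history() {
        return List.copyOf(history);
    }
}
```

Because a rollback is recorded as just another change, the history answers “who changed this value, when, and for what reason?” even for the undo itself. One consequence of this simple design is that rolling back twice in a row restores the value you just rolled back from.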

Note that from the code’s point of view, this distinction might be transparent. The code is like a machine, and configuration values come from one source or another, but it shouldn’t really care where. One common pattern for this is called “Dependency Injection”. The specific values can come from command line arguments, system properties, property files, or YAML files. These files can be checked in and bundled with the service, or they can be referenced externally.
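As a rough illustration of that transparency, the sketch below injects a lookup function into a class that needs one setting. The class name PoolSettings, the key "pool.threads", and the fallback of 10 are assumptions for the example; the sources shown use standard JDK calls (System.getProperty, java.util.Properties, and Map).

```java
import java.util.Map;
import java.util.Properties;
import java.util.function.Function;

// Hypothetical sketch: PoolSettings and the "pool.threads" key are made up for illustration.
final class PoolSettings {
    private final int threads;

    /** The lookup function is injected; this class never learns where values live. */
    PoolSettings(Function<String, String> lookup) {
        String raw = lookup.apply("pool.threads");
        // Fall back to an assumed default of 10 when the source has no value.
        this.threads = (raw == null) ? 10 : Integer.parseInt(raw);
    }

    int threads() {
        return threads;
    }

    public static void main(String[] args) {
        // Source 1: JVM system properties, e.g. java -Dpool.threads=30 PoolSettings
        PoolSettings fromSystem = new PoolSettings(System::getProperty);

        // Source 2: a Properties object, as if loaded from a checked-in file.
        Properties file = new Properties();
        file.setProperty("pool.threads", "20");
        PoolSettings fromFile = new PoolSettings(file::getProperty);

        // Source 3: an in-memory map, handy for tests.
        PoolSettings fromMap = new PoolSettings(Map.of("pool.threads", "5")::get);

        System.out.println(fromSystem.threads());
        System.out.println(fromFile.threads());
        System.out.println(fromMap.threads());
    }
}
```

Swapping the source is a one-line change at the point where the object is constructed, and the class that consumes the value does not change at all.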

Going even further, callouts to a Service Discovery service can be thought of as a “source of truth” for configurations, from the app’s perspective (functioning much like a database would). Internally, that service might treat its values as either versioned files or data; our app doesn’t know or care.

Scope: Where does this apply?

The same code can run in many different settings. It’s not enough to say what value goes with a given key; it could depend on where you’re running. Think about values more like a set of coordinates: for example, you might want the thread count for servers in one data center to be higher, because it contains more powerful machines. Or, you might choose a lightweight, non-durable form of storage in test environments, but use the real durable storage in prod.

I call this “logical location” the scope. There are three main classes of scoping agents:

- Physical Location: Where am I? That could include the name of the machine this code is running on, what data center it’s in, etc. This information is naturally hierarchical (machines are logically grouped into clusters, which are within data centers, etc).

- Hardware Platform: What am I? Settings could vary depending on your underlying hardware model, operating system, disk layout, etc.

- Mode: Who am I? Sometimes the same code can be run in different ways. You may have one codebase that can masquerade as different services depending on configurations, functioning as a high level “switch” that’s consulted at many places in the code.

Some configuration systems use different files for different scopes (e.g. one file for a Foo server and another for a Bar server; one for dev and another for prod; etc). These files are typically arranged in a hierarchy, going from general to specific; for any given property, the value you end up with comes from the most specific layer at which a value was given. For example, you might have one file called “default.properties” that every environment shares, which says “encrypted=false”; then, you may have another file called “production.properties” that is only applied in production and overrides this value to say “encrypted=true”.
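In Java, one way to sketch this kind of layering is with java.util.Properties, whose constructor accepts a parent set of defaults. The file contents are inlined as strings here so the example is self-contained; LayeredProperties and the maxThreads key are illustrative names.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Sketch of layering with java.util.Properties. LayeredProperties is an
// illustrative name; the "files" are inlined strings for the example.
final class LayeredProperties {
    /** Parses .properties text into a layer that falls back to parent for missing keys. */
    static Properties layer(Properties parent, String text) {
        Properties props = new Properties(parent); // parent supplies the defaults
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    public static void main(String[] args) {
        // Stand-in for default.properties, shared by every environment.
        Properties defaults = layer(null, "encrypted=false\nmaxThreads=20\n");
        // Stand-in for production.properties, applied only in production.
        Properties production = layer(defaults, "encrypted=true\n");

        System.out.println(production.getProperty("encrypted"));  // true  (overridden)
        System.out.println(production.getProperty("maxThreads")); // 20    (inherited)
        System.out.println(defaults.getProperty("encrypted"));    // false (general layer unchanged)
    }
}
```

The most specific layer wins for any key it defines, and everything else falls through to the more general layer.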

Another way to do it is to combine the axes into a single file with explicit scoping:

<boolean name="goFast">
  <default>false</default>
  <case env="prod">true</case>
</boolean>

This can cut down on the confusion about where a value comes from (there’s only one possibility!), but the monolithic file means you must deploy changes for any environment out to every environment (not great).
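For illustration, here is one way a reader for that explicitly scoped format might look, using the JDK’s built-in DOM parser. The element and attribute names follow the snippet above; the ScopedXmlConfig class and its resolution rule (first matching case, otherwise the default) are assumptions, not a description of any particular system.

```java
import java.io.StringReader;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;
import org.xml.sax.InputSource;

// Hypothetical sketch: element names follow the snippet above, but
// ScopedXmlConfig is an illustrative reader, not a real library.
final class ScopedXmlConfig {
    /** Returns the case value whose env attribute matches, else the default value. */
    static boolean resolve(String xml, String env) {
        Element root;
        try {
            root = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new InputSource(new StringReader(xml)))
                    .getDocumentElement();
        } catch (Exception e) {
            throw new IllegalArgumentException("unreadable configuration", e);
        }
        NodeList cases = root.getElementsByTagName("case");
        for (int i = 0; i < cases.getLength(); i++) {
            Element c = (Element) cases.item(i);
            if (env.equals(c.getAttribute("env"))) {
                return Boolean.parseBoolean(c.getTextContent().trim());
            }
        }
        NodeList defaults = root.getElementsByTagName("default");
        if (defaults.getLength() == 0) {
            throw new IllegalArgumentException("missing default for " + root.getAttribute("name"));
        }
        return Boolean.parseBoolean(defaults.item(0).getTextContent().trim());
    }

    public static void main(String[] args) {
        String xml = "<boolean name=\"goFast\">"
                + "<default>false</default>"
                + "<case env=\"prod\">true</case>"
                + "</boolean>";
        System.out.println(resolve(xml, "prod")); // true
        System.out.println(resolve(xml, "test")); // false
    }
}
```

Every possible value for goFast is visible in one place, which is exactly the readability benefit (and the deployment cost) described above.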

To be clear, these “scoping values” can also be used directly as config values; e.g., you could have code like:

if ("test".equals(config.get("environmentType"))) {
    // do the test thing
} else {
    // do the production thing
}

There’s nothing wrong with this per se, but if you have a lot of copies of that same logic in your code, you may find it clearer to extract the location into a scope that the configuration system knows about.

Ownership: Who decides?

Regardless of which source of truth you store values in, there’s an even more fundamental question: who should actually decide what the “right” value is, at any given time? The answer could be any combination of:

- Coders. The author of the code may have supplied reasonable default values in some cases (ideally with good explanations). She or he may also have the prerogative to offer different values in different scopes (e.g., “you should use a higher thread pool size for a bigger machine”).

- Operators. An ops (or DevOps) team may have a tighter feedback loop around specific config values (e.g., changing the logging granularity during debugging).

- Management. Sometimes the right call for a value isn’t a purely technical one. For example: weighing risk on enabling a new feature, or making a site switch.

- The real world. If configuration values are supposed to represent stuff in the real world (for example, server names), ideally they come straight from what’s really out there (e.g., via Service Discovery).

- Software. Some pieces of configuration and metadata aren’t created or maintained by humans, but instead by some software process. (In that sense, they’re more like derived or cached values.)

- Security systems. Certificates, private keys, and other communication secrets may have a different source than other configs.

There’s no “right” answer, but you should be clear what the answer is for each kind of configuration you have.

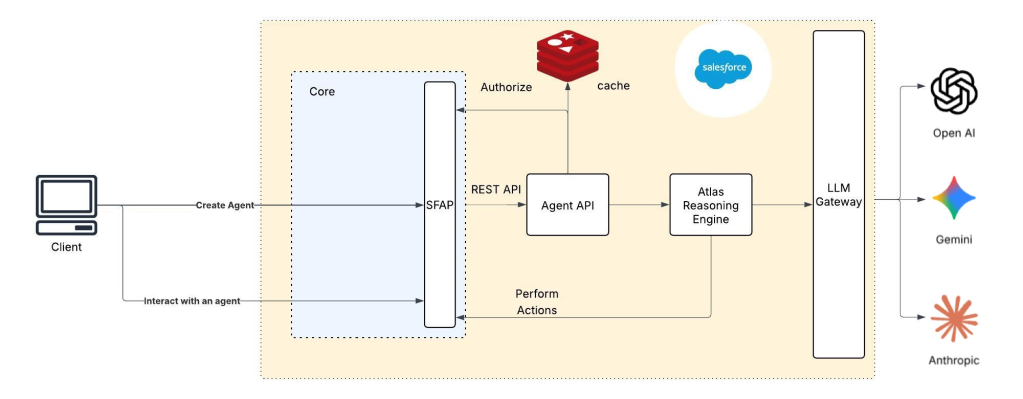

How Do We Configure Salesforce?

The most common kind of configuration in existence (at Salesforce, or anywhere else, for that matter) is simple “config” or “properties” files: plain text files (e.g. in XML, JSON, YAML, etc.) containing literal key/value entries for parameters and flags (like “max_threads=20” or “fast=true”). They are often “layered” to manage scope; you might have one file for the default configurations (say, things common to all environments), and then another layer that overrides a subset of those values (say, for your test machine, or a certain OS flavor). Many languages have standard-library support for reading files like these at runtime (like java.util.Properties for Java .properties files).

While just about every service at Salesforce uses this type of text configuration, we’ve also got another specialized system that handles the complexity of our production environment. This system is based on structured files with a pre-defined set of axes, to control the scope of values:

- Environment — Is this development, test, or production?

- Sub-environment — Which copy of that environment (if there are multiple)? There’s only one “prod” but there are lots of parallel dev and test environments.

- Instance — Salesforce’s core infrastructure is sharded into many identical copies for scale (more on that way back in Episode 2, The Crystal Shard). Which instance(s) does this value apply to?

- Data Center — which data center(s) does this value apply in?

- Role — what functional machine type does this value apply to? Application server, a file server, etc.

These structured files generate Java accessor classes to view (but not change) the data at runtime. This allows engineers to access them in a standard, efficient way everywhere. And it allows us to keep a consistent, coherent view on a very large set of infrastructure deployments.
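To give a feel for what a read-only, axis-scoped accessor could look like, here is a purely illustrative sketch. It is not Salesforce’s implementation: the class names, the “null means any” convention, and the “most specific rule wins” resolution are all assumptions. It requires Java 16 or later for records.

```java
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;
import java.util.Objects;

// Purely illustrative: names and matching rules are assumptions, not Salesforce's system.
final class AxisScopedValue {
    /** Where the code is running; the axes follow the list above. */
    record Scope(String environment, String subEnvironment, String instance,
                 String dataCenter, String role) {
        List<String> axes() {
            // Arrays.asList is used because it tolerates null ("any") entries.
            return Arrays.asList(environment, subEnvironment, instance, dataCenter, role);
        }
    }

    /** A value plus the scope it applies to; a null axis means "any". */
    record Rule(Scope appliesTo, int value) {
        boolean matches(Scope actual) {
            List<String> want = appliesTo.axes();
            List<String> have = actual.axes();
            for (int i = 0; i < want.size(); i++) {
                if (want.get(i) != null && !want.get(i).equals(have.get(i))) {
                    return false;
                }
            }
            return true;
        }

        /** Number of axes this rule pins down. */
        long specificity() {
            return appliesTo.axes().stream().filter(Objects::nonNull).count();
        }
    }

    private final List<Rule> rules;

    AxisScopedValue(List<Rule> rules) {
        this.rules = List.copyOf(rules);
    }

    /** Read-only accessor: callers can view the resolved value but not change it. */
    int get(Scope actual) {
        return rules.stream()
                .filter(r -> r.matches(actual))
                .max(Comparator.comparingLong(Rule::specificity))
                .orElseThrow(() -> new IllegalStateException("no rule matches " + actual))
                .value();
    }
}
```

For example, a rule with every axis left as null acts as the default, and a rule that pins environment to "prod" and data center to "ABC" overrides it only for machines matching both. The sketch does not define what happens when two equally specific rules match; a real system would need an explicit tie-breaking rule.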

We’ve also got a variety of dynamic mechanisms for configuration, which I hope to cover in a future post (because they’re pretty cool). Naturally, various other parts of our infrastructure (like the networks) can have specialized ways that they’re configured too, ranging from static to dynamic.

But, in every case, the set of 5 axes above (effect, lifecycle, source of truth, scope, and ownership) is a great way to make sure that everyone is at least speaking the same language.

Got different ideas about configuration? Share them with us!

Big thanks to several folks who helped with today’s episode; in particular, Ben Zimmerman, David Murray, Hugo Haas and Artem Golubev.

Want more Architecture Files? Proceed to Episode #8 …