Every e-commerce application is going to need caching. For some of our customers, millions of shoppers may look at the same product information and, if you have to request that information from the service every time, your application will not scale. This is why we built the Commerce SDK with caching as a primary consideration from the start. Here we will discuss how to implement a custom caching solution based on what we learned, demonstrate how to move to a distributed cache, and explore what our customers will see when they start using our new Commerce SDK.

Commerce SDK out of the box

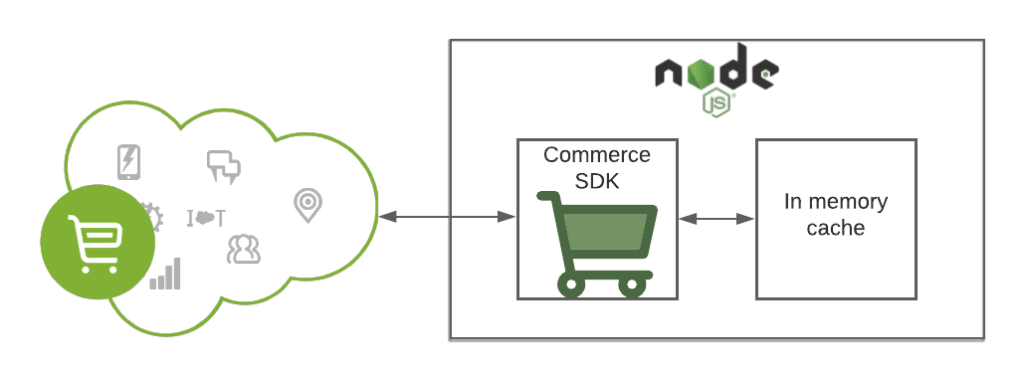

The Commerce SDK uses an in-memory cache by default, with a limit of 10,000 items. It uses a “least recently used” (LRU) eviction policy: when the cache reaches that limit, the least recently used response is evicted to make room. Caching behavior is determined by the standard HTTP cache headers on each response. Responses marked as “private” are not cached, and authorization headers are stripped before the response is written to the cache.
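The LRU policy is easy to see in a few lines of code. The sketch below is not the SDK's implementation; the `LruCache` class and its `maxSize` parameter are hypothetical. It relies on a JavaScript `Map` iterating keys in insertion order, so re-inserting a key on every read keeps the least recently used entry at the front, ready to be evicted.

```typescript
// Hypothetical sketch of a size-bounded LRU cache; not the Commerce SDK's own code.
class LruCache<V> {
  // A Map iterates in insertion order, so the first key is the least recently used.
  private readonly entries = new Map<string, V>();

  constructor(private readonly maxSize: number = 10_000) {}

  get(key: string): V | undefined {
    if (!this.entries.has(key)) return undefined;
    const value = this.entries.get(key) as V;
    // Re-insert to mark this key as the most recently used.
    this.entries.delete(key);
    this.entries.set(key, value);
    return value;
  }

  set(key: string, value: V): void {
    // Delete first so an update also moves the key to the most-recent position.
    this.entries.delete(key);
    this.entries.set(key, value);
    if (this.entries.size > this.maxSize) {
      // Evict the least recently used entry (the oldest key in iteration order).
      const oldest = this.entries.keys().next().value;
      if (oldest !== undefined) this.entries.delete(oldest);
    }
  }

  get size(): number {
    return this.entries.size;
  }
}

// Usage: with room for two entries, reading "a" protects it, so "b" is evicted.
const lru = new LruCache<number>(2);
lru.set("a", 1);
lru.set("b", 2);
lru.get("a");
lru.set("c", 3);
console.log(lru.get("b")); // undefined
```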

Let’s look at how things work when making a call for product details using the Commerce SDK.

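```typescript
import { Product } from "commerce-sdk";

// `config` holds your API client settings; see the commerce-sdk README
// Create a new ShopperProducts API client
const productClient = new Product.ShopperProducts(config);

// Get product details
const details = await productClient.getProduct({
  parameters: {
    id: "25591139M",
  },
});
```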

(Note: for complete code examples, please see the commerce-sdk README.)

If we inspect the `Cache-Control` header of the response, we see:

```
public, must-revalidate, max-age=60
```

Responses may be marked as either public or private. Public responses should not contain any information specific to a requester and may be shared. Private responses should not be cached anywhere except the user’s browser. Since the response in this example is public, it is written to our cache. Because of `max-age=60`, if we make the same request again within 60 seconds, the SDK returns the response from our cache without making any request to the server. If we try the same request after 60 seconds have passed, the cached response is stale and will not be used; the request continues to the server.
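A minimal sketch of the public/private and max-age rules above, assuming the header value has already been read from the response. The helper names (`parseCacheControl`, `isStorable`, `isFresh`) and the `CacheControl` shape are hypothetical and not part of the Commerce SDK; the parser handles only the directives discussed here.

```typescript
// Hypothetical helpers illustrating the rules above; not the SDK's internal API.
interface CacheControl {
  isPublic: boolean;
  isPrivate: boolean;
  noStore: boolean;
  maxAge?: number; // seconds
}

function parseCacheControl(header: string): CacheControl {
  const result: CacheControl = { isPublic: false, isPrivate: false, noStore: false };
  for (const raw of header.split(",")) {
    const [directive, value] = raw.trim().toLowerCase().split("=");
    if (directive === "public") result.isPublic = true;
    else if (directive === "private") result.isPrivate = true;
    else if (directive === "no-store") result.noStore = true;
    else if (directive === "max-age" && value !== undefined) {
      const seconds = Number.parseInt(value, 10);
      if (!Number.isNaN(seconds)) result.maxAge = seconds;
    }
  }
  return result;
}

// A shared cache may store a response only if it is neither private nor no-store.
function isStorable(cc: CacheControl): boolean {
  return !cc.isPrivate && !cc.noStore;
}

// A stored response is served without contacting the server while its age is below max-age.
function isFresh(cc: CacheControl, storedAtMs: number, nowMs: number): boolean {
  if (cc.maxAge === undefined) return false;
  return (nowMs - storedAtMs) / 1000 < cc.maxAge;
}

// Usage with the header from the example response
const cc = parseCacheControl("public, must-revalidate, max-age=60");
console.log(isStorable(cc));         // true
console.log(isFresh(cc, 0, 30_000)); // true: served from the cache
console.log(isFresh(cc, 0, 61_000)); // false: the request goes to the server
```

The sketch ignores `must-revalidate`: that directive only forbids serving a response once it is stale, and these helpers never treat a stale response as usable.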

Distributed Caching with Redis

Our simple default cache is, well, simple. It’s certainly adequate for development and single-instance applications, but its weaknesses begin to show in a real-world deployment. If we run our application as autoscaling containers, each container has to maintain its own separate cache, share its memory between that cache and the application, and lose the cache whenever it restarts. We provide a better way.

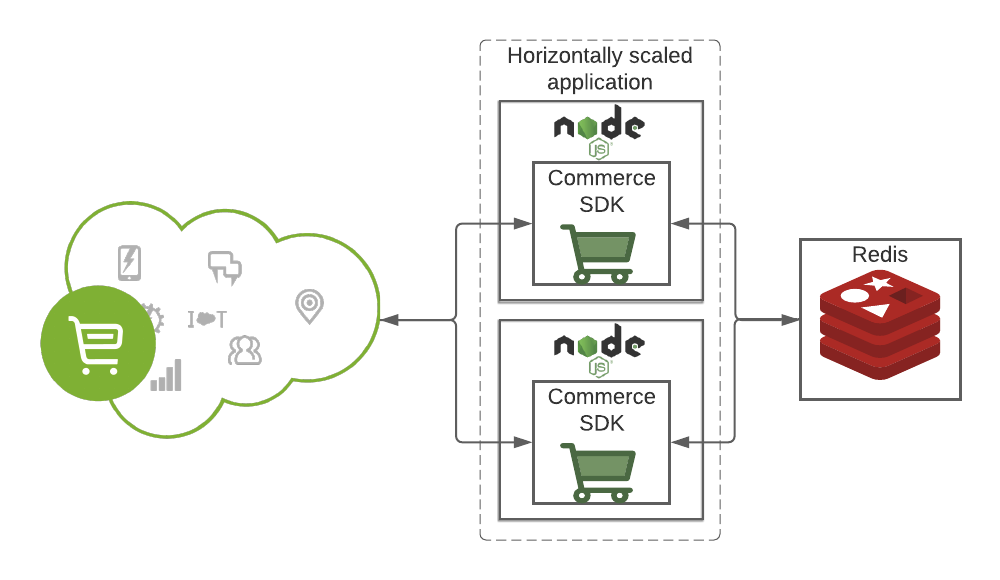

The Commerce SDK comes ready to connect to Redis by supplying your configuration info (also documented in the commerce-sdk README). This allows us to scale out our application horizontally while benefiting from a shared cache.

Bring your own Caching Solution

Both of the caching options we’ve discussed are implementations of the Cache API documented on MDN. The Commerce SDK will accept any implementation of that interface. A TypeScript definition of the interface can be imported with:

```typescript
import { ICacheManager } from "@commerce-apps/core/dist/base/cacheManager";

export class CacheManagerCustom<T> implements ICacheManager { ... }
```
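To make the interface shape concrete, here is a sketch of a tiny in-memory cache manager with `match`, `put`, and `delete` methods modelled loosely on the MDN Cache API. The types `CachedRequest`, `CachedResponse`, and `SimpleCacheManager` are hypothetical stand-ins; the real `ICacheManager` definition in `@commerce-apps/core` may use different types and signatures, so check it before adapting this. Following the behavior described earlier, `put` drops any authorization header before storing.

```typescript
// Hypothetical, simplified stand-ins for fetch's Request/Response types.
interface CachedRequest {
  url: string;
}

interface CachedResponse {
  status: number;
  headers: Record<string, string>;
  body: string;
}

// Hypothetical interface modelled loosely on the MDN Cache API (match/put/delete).
// The real ICacheManager in @commerce-apps/core may differ.
interface SimpleCacheManager {
  match(request: CachedRequest): Promise<CachedResponse | undefined>;
  put(request: CachedRequest, response: CachedResponse): Promise<void>;
  delete(request: CachedRequest): Promise<boolean>;
}

class InMemoryCacheManager implements SimpleCacheManager {
  // Entries are keyed by request URL.
  private readonly store = new Map<string, CachedResponse>();

  async match(request: CachedRequest): Promise<CachedResponse | undefined> {
    return this.store.get(request.url);
  }

  async put(request: CachedRequest, response: CachedResponse): Promise<void> {
    // Copy headers, skipping authorization so credentials never land in the cache.
    const headers: Record<string, string> = {};
    for (const [key, value] of Object.entries(response.headers)) {
      if (key.toLowerCase() !== "authorization") headers[key] = value;
    }
    this.store.set(request.url, { ...response, headers });
  }

  async delete(request: CachedRequest): Promise<boolean> {
    return this.store.delete(request.url);
  }
}

// Usage sketch with a hypothetical URL
async function demoCustomCache(): Promise<void> {
  const manager = new InMemoryCacheManager();
  const request = { url: "https://example.com/products/25591139M" };
  await manager.put(request, { status: 200, headers: { authorization: "Bearer token" }, body: "{}" });
  const cached = await manager.match(request);
  console.log(cached?.headers); // {}
}
void demoCustomCache();
```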

Before we jump to an entire implementation from scratch, let’s break the cache manager into its two fundamental pieces: how to cache and where to cache. The cache manager implementations in the SDK separate the two concerns by using the pluggable Keyv storage interface. While only quick-lru and Redis have been tested in the Commerce SDK, you’re free to test out other supported Keyv backends. Maybe we can try Memcached with the script we were working on earlier?
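The split between how and where to cache can be sketched with a small storage interface. Everything below is hypothetical: `KeyValueStore` is loosely modelled on Keyv storage adapters (async get/set/delete with an optional TTL), `MapStore` is one in-process backend, and `StoreBackedCache` holds the caching logic. Writing metadata and body under separate keys mirrors the `request-cache-metadata:` and `request-cache:` entries in the Memcached dump later in this post, but the SDK's actual key format and serialization may differ.

```typescript
// Hypothetical "where to cache" contract, loosely modelled on Keyv storage adapters.
interface KeyValueStore {
  get(key: string): Promise<string | undefined>;
  set(key: string, value: string, ttlMs?: number): Promise<void>;
  delete(key: string): Promise<boolean>;
}

// One possible backend: an in-process Map with expiry and an injectable clock.
class MapStore implements KeyValueStore {
  private readonly data = new Map<string, { value: string; expiresAt: number | undefined }>();

  constructor(private readonly now: () => number = Date.now) {}

  async get(key: string): Promise<string | undefined> {
    const entry = this.data.get(key);
    if (!entry) return undefined;
    // Lazily drop entries whose TTL has elapsed.
    if (entry.expiresAt !== undefined && this.now() >= entry.expiresAt) {
      this.data.delete(key);
      return undefined;
    }
    return entry.value;
  }

  async set(key: string, value: string, ttlMs?: number): Promise<void> {
    const expiresAt = ttlMs === undefined ? undefined : this.now() + ttlMs;
    this.data.set(key, { value, expiresAt });
  }

  async delete(key: string): Promise<boolean> {
    return this.data.delete(key);
  }
}

// "How to cache": policy code that only talks to the KeyValueStore interface.
// Metadata and body live under separate keys, like the prefixes in the Memcached dump.
class StoreBackedCache {
  constructor(private readonly store: KeyValueStore) {}

  async put(url: string, metadata: object, body: string, ttlMs: number): Promise<void> {
    await this.store.set(`request-cache-metadata:${url}`, JSON.stringify(metadata), ttlMs);
    await this.store.set(`request-cache:${url}`, body, ttlMs);
  }

  async match(url: string): Promise<{ metadata: unknown; body: string } | undefined> {
    const metadata = await this.store.get(`request-cache-metadata:${url}`);
    const body = await this.store.get(`request-cache:${url}`);
    // Treat a partial entry as a miss.
    if (metadata === undefined || body === undefined) return undefined;
    return { metadata: JSON.parse(metadata), body };
  }
}
```

Replacing `MapStore` with a Redis- or Memcached-backed class changes where entries live without touching `StoreBackedCache`, which is the same separation the SDK gets from the pluggable Keyv interface.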

We can get Memcached running locally with Docker, e.g.:

```shell
$ docker run -p11211:11211 --name memcached -d memcached
```

and then install the Keyv Memcache package:

```shell
$ npm install --save keyv-memcache
```

To configure it in our script:

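```typescript
import { CacheManagerKeyv } from '@commerce-apps/core';
const KeyvMemcache = require('keyv-memcache');

// Point the Keyv Memcache adapter at the Memcached container started above
const memcache = new KeyvMemcache('user:pass@localhost:11211');

const config = {
  // Keep the SDK's caching logic, but store entries in Memcached
  cacheManager: new CacheManagerKeyv({ keyvStore: memcache }),
  ...
};
```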

Now when we run our script again, the output looks nearly the same, but we can look in Memcached and see that the response was cached:

```shell
$ docker exec -it --user root memcached bash
...# apt-get update && apt-get install -y libmemcached-tools
...# memcdump --servers=localhost
keyv:keyv:request-cache-metadata:https://shortcode.api.commercecloud.salesforce.com/product/shopper-products/v1/organizations/orgid/products/25591139M?siteId=RefArch
keyv:keyv:request-cache:https://shortcode.api.commercecloud.salesforce.com/product/shopper-products/v1/organizations/orgid/products/25591139M?siteId=RefArch
```

Conclusion

This is just the tip of the iceberg, but it shows how simple it is to add caching to a headless implementation of our commerce APIs with the Commerce SDK. There is much more to read about caching and what the Commerce SDK can do for you. To get started, head over to our Commerce SDK documentation!