Hello friends! It’s time for episode #8 of the Salesforce Architecture Files, a series where we talk about how we make the computers go.

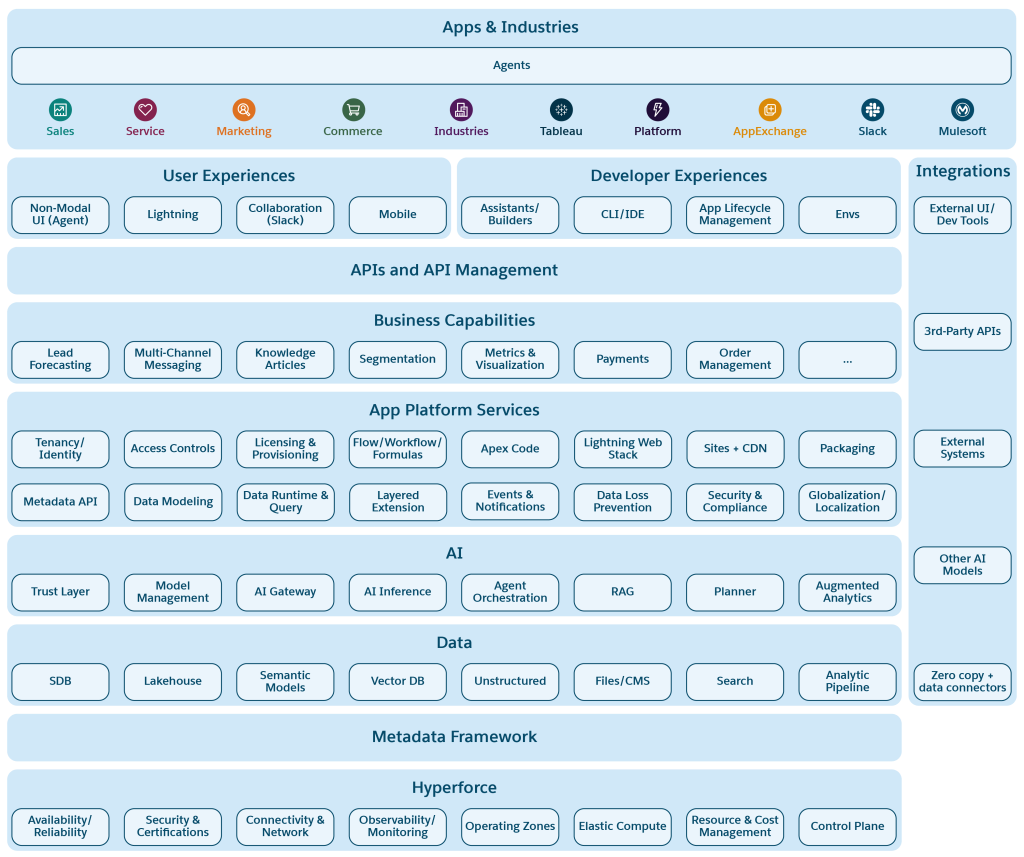

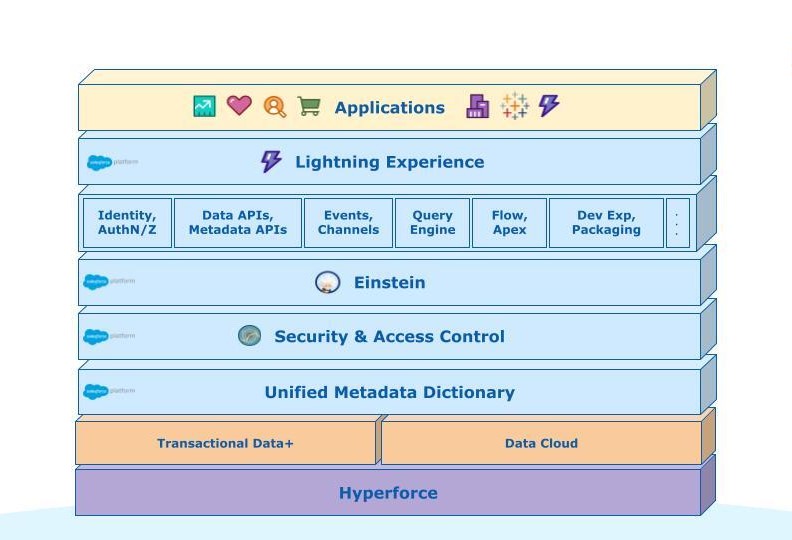

In the past few episodes, we’ve talked about a wide variety of topics: Sharding, Event Architectures, Databases, Transactions, Systems of Record, Configuration … whew! But as I reflected on all of this detail, it occurred to me that I’ve never really covered what the systems look like from a macro point of view. I’ve been talking about the trees, without ever giving you a view of the forest. So, let’s put that right.

You Are Here

In my room growing up, I had a poster on my wall. (OK, fine, I had a lot of posters, including at least one incredibly uncool Stryper poster; but that’s a topic for another day.) The poster I’m talking about was from National Geographic magazine; it was called “The Universe”, and you can see it here. Basically, it was a “map” of the universe, scaled into recognizable groupings: our solar system, our area of the galaxy, our whole galaxy, etc … on up to galactic superclusters.

What mesmerized me about this poster was the sense of vertigo—you could start with a scale you understood (the solar system) and keep zooming out with wider and wider lenses. No matter how vast each view seemed, it was really just a tiny speck in the context of something much bigger. I spent hours trying to wrap my brain around the impossible size of the universe.

What we have in software systems is nowhere near that mind-bending scale, obviously. But the same approach is still a useful way to engage with a complex system: move outward through concentric circles of scale, and use each one to situate yourself.

So, as Carl Sagan might say, let’s climb on board the ship of the imagination and give it a try.

Tenant

Let’s start with a typical day using a system like Salesforce. You get to work, get some coffee, check the price of Dogecoin, and then log in to your Salesforce account. When you do, you see a variety of things: reports of data you are interested in, messages from coworkers, recommendations for actions you should take, etc. — a variety of data that you might view, modify, and otherwise act on throughout your day.

In logging in, you’re situating yourself inside a single tenant. What’s a tenant? It’s a type of logical container (not a physical one, though we’ll talk about those below). In essence, a tenant is really just a group of users and some shared data and settings. Users of the system can interact with their shared pool of data, but not data owned by other tenants.

As a concept, a tenant-based system is sort of halfway between a system where every user has their own private data (like an email system or bank) and a system where all the users collaborate on shared data (like a social network, or Wikipedia). Users do share data with each other all right, but according to rules, within the bounds of their tenant. Data that belongs to other tenants might as well not exist — it’s invisible and untouchable.

Salesforce is a multitenant system, which means that the software itself is explicitly aware of tenants, and handles all the mechanics of making sure that every request, by every user, is scoped to the appropriate data. In fact, tenants are the primary abstraction of control and security in the system. Every one of the other layers we’ll talk about (below) assumes that any work it’s doing is for exactly one of the many tenants that are coresident in the same instances of infrastructure and software.

Tenants are virtual; that is, they’re based on unique identifiers, rather than physical workload separation. Despite that, they are no less “real” from a user point of view. In fact, many things that a software system would normally treat as universally shared (like, say, the schema of a database) are not actually shared. Nearly everything a human interacts with, be it data, code, or configuration, is scoped to exist inside one tenant. That means this behavior needs to be driven by per-tenant metadata, rather than hard-coded by our engineering team in one particular way.
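To make that a bit more concrete, here is a minimal Python sketch of tenant scoping by identifier, with each tenant's fields described by metadata rather than a shared, hard-coded schema. Everything in it (`TenantStore`, `define_field`, the tenant names) is hypothetical and invented for illustration; it is a toy under those assumptions, not a description of how Salesforce actually implements multitenancy.

```python
from collections import defaultdict


class TenantStore:
    """Toy multitenant store: one shared structure, scoped by tenant ID.

    Hypothetical illustration only; not Salesforce's implementation.
    """

    def __init__(self):
        # Per-tenant "schema" kept as metadata: tenant_id -> set of field names.
        self._fields = defaultdict(set)
        # All tenants' rows live in one shared list, each tagged with its owner.
        self._rows = []

    def define_field(self, tenant_id, field):
        """Add a custom field for one tenant without affecting any other."""
        self._fields[tenant_id].add(field)

    def insert(self, tenant_id, record):
        """Store a record after validating it against the tenant's own metadata."""
        unknown = set(record) - self._fields[tenant_id]
        if unknown:
            raise ValueError(f"fields not defined for {tenant_id}: {sorted(unknown)}")
        self._rows.append((tenant_id, dict(record)))

    def query(self, tenant_id):
        """Return only the rows that belong to the requesting tenant."""
        return [row for owner, row in self._rows if owner == tenant_id]


store = TenantStore()
store.define_field("acme", "opportunity_value")
store.define_field("globex", "region")
store.insert("acme", {"opportunity_value": 5_000_000_000})
store.insert("globex", {"region": "EMEA"})
acme_rows = store.query("acme")      # globex's row is invisible here
globex_rows = store.query("globex")
```

Note that the two tenants effectively have different schemas, even though they share one store: `acme` cannot insert a `region` field until its own metadata says that field exists.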

Services

OK, so our user is happily logged in to a single tenant. Let’s say they start their day by taking a few actions, like searching for a record (“Mars Drilling Company”), updating a value (“Opportunity Value = $5B”), and reviewing the Einstein machine learning prediction about how likely this opportunity is to close (moved from 60% to 73%).

In that simple series of actions, the user is not interacting with just one software program; they’re interacting with several independent ones. This brings us to the next stop on our trip outward: services.

A service is a running computer program that takes inputs in the form of requests from users (or other services), and returns useful output of one kind or another. It’s essentially a “daemon”, to use software lingo; it mostly just sits there waiting until someone has some work for it to do. That work could be anything, depending on what the service is written to do — answering a user request, rendering some UI, storing some data, etc.

Within Salesforce, there are many different services for specialized purposes. To take the examples above — a search query, a data update, or running a machine learning algorithm — each of those relies on separate services today:

- Search queries are handled by an instance of Apache Solr, which indexes new changes to data and makes them available for fast full-text searching.

- Record data is stored in a relational database (which, if you’ll recall, is one magical, transactional system of record).

- Machine learning is done in dedicated clusters that run software like R and Apache Spark (among many others).

And, these are just the tip of the ice giant; there are hundreds more discrete services, all of which have specialized purposes as part of preparing and servicing users’ requests.

It’s worth noting one special “uber” service, which also connects many others: the Core Application service (often referred to internally as just “the Core App”). For many parts of Salesforce’s product suite (including Sales Cloud and Service Cloud), the Core App is the center of a lot of what goes on. Physically, it’s made up of a pool of stateless servers, which broker nearly all the user requests made through the UI or API. The servers run Jetty, a Java servlet container; they route work to other services and perform the core business logic of tenant data protection and metadata interpretation. (They also coordinate a few other parts of the system, like logging, debugging, configuration, and more.)
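If it helps to picture the “stateless broker” idea, here is a tiny Python sketch. The class and function names (`CoreBroker`, `search_service`, `database_service`) are made up for this example, and the real Core App is a Java application that does far more than this; the only point is that the broker keeps a routing table, holds no per-request state, and passes the tenant along with every downstream call.

```python
class CoreBroker:
    """Toy stateless broker: holds no per-request state, only a routing table."""

    def __init__(self, services):
        # action name -> callable service; all names here are hypothetical.
        self._services = dict(services)

    def handle(self, tenant_id, action, payload):
        """Route one request to the service that owns the action."""
        service = self._services.get(action)
        if service is None:
            raise KeyError(f"no service registered for action {action!r}")
        # The tenant ID travels with every downstream call.
        return service(tenant_id, payload)


def search_service(tenant_id, payload):
    """Stand-in for a search backend."""
    return f"search[{tenant_id}]: {payload}"


def database_service(tenant_id, payload):
    """Stand-in for the relational system of record."""
    return f"db[{tenant_id}]: {payload}"


broker = CoreBroker({"search": search_service, "update": database_service})
search_reply = broker.handle("acme", "search", "Mars Drilling Company")
update_reply = broker.handle("acme", "update", "Opportunity Value = $5B")
```

Because the broker itself remembers nothing between calls, any server in the pool could handle any request, which is what makes a pool of interchangeable servers workable.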

Is it a good pattern to have a service like this, that does so many different functions? It’s mixed, to be honest. There are some ways in which it makes our lives easier as engineers; like, for example, ensuring that the interpretation of tenant metadata is consistent, regardless of which subsystems are being connected. There are also ways in which it makes our lives a little more challenging, because so many different engineering teams are making changes to it simultaneously (so, there’s more to coordinate and test). We’ll talk at more length in a future episode about these challenges, and what we’re doing to evolve this part of the architecture.

But, that said, the Core App is still just one among many critical services. Data storage, search, caching, event transport, debugging, message queues, feature flagging, image processing, and many more—these all run as discrete services, connected in a big mesh of communication that ultimately presents the final outputs to user requests.

And, remember that this layer builds upon the multitenancy concept as well. Every request, with its fanout of calls to other services, is serving a particular user, in a particular tenant. That means, regardless of what service you’re talking to, the request typically has a little work to do up front to establish context — what’s the current user and tenant, what kind of rights do they have, etc. For every system, if this context isn’t properly established, the service will typically reject the request (as it should).
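Here is a small Python sketch of that “establish context up front, or reject” pattern. The names (`RequestContext`, `establish_context`, the `session-token` header, the permission strings) are assumptions made for the example, not real Salesforce APIs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    """Who is asking, and on behalf of which tenant (hypothetical shape)."""
    tenant_id: str
    user_id: str
    permissions: frozenset


class ContextError(Exception):
    """Raised when a request lacks usable context or sufficient rights."""


def establish_context(headers, sessions):
    """Resolve a session token into a RequestContext, or reject the request."""
    token = headers.get("session-token")
    if token is None or token not in sessions:
        raise ContextError("no valid session; rejecting request")
    return sessions[token]


def update_record(headers, sessions, record_id, value):
    """Toy service endpoint: context first, then the rights check, then work."""
    ctx = establish_context(headers, sessions)
    if "edit" not in ctx.permissions:
        raise ContextError(f"user {ctx.user_id} may not edit records")
    return {"tenant": ctx.tenant_id, "record": record_id, "value": value}


sessions = {"tok-1": RequestContext("acme", "u42", frozenset({"read", "edit"}))}
result = update_record({"session-token": "tok-1"}, sessions, "opp-7", "$5B")
```

A call with no `session-token` header never reaches the business logic at all; it fails in `establish_context` with a `ContextError`.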

Instances

Onward! As we zoom out, you can see that this suite of interacting services is a bit like a solar system … and it’s not the only one, there are neighboring solar systems too. These are what we call instances.

What’s an instance? Put simply, it’s a (mostly) independent set of all the running services you might need to run some part of Salesforce. (What do I mean by “some part of Salesforce”? Hold that question, we’ll cover that next.) As an example, for Sales Cloud and Service Cloud, there are around 100 instances, each of which centers on one relational database and has its own set of independent running services for cache, feature flagging, and many of the other examples I gave above.

Why do we do this? Why not just have one big soup of services? There are a number of reasons. For one thing, as I explained early on in this series, part of our approach to scalability is to shard our systems by customer. Breaking into multiple instances is how we do that. There are other reasons, too; breaking into multiple instances allows for better fault isolation, and to some degree, it also offers us the ability to decrease network latency for customers in some geographical regions. (For example, our many customers in Japan primarily use an instance that’s physically located in Japan, so time spent going over the network is minimized.)
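As a rough sketch of what sharding by customer looks like, here is a toy Python directory that maps each tenant to exactly one instance. The class and the instance names (`instance-us-1`, `instance-jp-1`) are invented for illustration; how Salesforce actually places and routes tenants is not described here.

```python
class InstanceDirectory:
    """Maps each tenant to the single instance that hosts it (toy example)."""

    def __init__(self):
        self._home = {}  # tenant_id -> instance name (hypothetical names)

    def assign(self, tenant_id, instance):
        """Place a tenant on an instance; a tenant lives on exactly one."""
        self._home[tenant_id] = instance

    def route(self, tenant_id):
        """Return the instance that should serve this tenant's requests."""
        try:
            return self._home[tenant_id]
        except KeyError:
            raise LookupError(f"unknown tenant: {tenant_id}") from None

    def tenants_on(self, instance):
        """List the tenants that would be affected by a problem on one instance."""
        return sorted(t for t, i in self._home.items() if i == instance)


directory = InstanceDirectory()
directory.assign("acme", "instance-us-1")
directory.assign("globex", "instance-us-1")
directory.assign("sakura", "instance-jp-1")  # e.g. placed near its users
```

The fault isolation benefit shows up in `tenants_on`: trouble on `instance-us-1` touches `acme` and `globex`, while `sakura` carries on unaffected on its own instance.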

Now, it’s worth noting this level of “zoom” isn’t completely uniform. There are some services which actually serve multiple instances, because by their nature they are meant to scale horizontally, and “bigger is better”. One example of this is Apache HBase, a distributed storage system we use in addition to our relational database. These HBase clusters are also broken into multiple instances (rather than one big global instance), but the granule size for these services is larger. (You’ll sometimes hear us refer to the services that run at this level as serving “instance groups”.)

You can see the different instances that Salesforce runs by taking a look at our Salesforce status web site, which gives full transparency into the array of instances we have, and their uptime and reliability.

Properties

Now we’re getting to the point of zooming way out. Not only are there galaxies, but there are clusters of galaxies! And superclusters! Billions and billions of them!!

OK, not really — I think I’ve strained the space metaphor to the point of breaking. But, there’s one more level that’s useful to talk and think about in Salesforce’s overall architecture, and that’s what we refer to as a property.

A property is a distinct architecture for processing some broad type of user interaction. This is roughly aligned to the different product lines we sell (Sales Cloud, Marketing Cloud, Commerce Cloud, etc.) but not perfectly, as some of those product lines actually run as part of the same property (Sales Cloud and Service Cloud, for example). You can see the list of different properties on our Trust website as well.

Why do we have different properties at all? Some of the reasons are historical, and some are architectural. The historical ones are probably obvious: Salesforce has grown both by ongoing organic development and via acquisitions of other companies, like ExactTarget and Heroku. But the architectural reasons are (IMO) more interesting.

What’s an example of an architectural reason? The real-time, relational database-backed magic of the Sales Cloud and Service Cloud platform is extremely powerful, because it gives business users the ability to adapt their metadata at any time, and see a real-time view of all their data, with fine-grained data access control capabilities that can be modified on the fly. This is powerful, but it isn’t necessarily the right way to architect consumer-facing commerce sites or marketing messages, where interactions number in the billions per day, and an extremely low latency response is critical to consumer engagement. Our ability to cache data, move it to the edge of the network, and degrade gracefully when needed for uptime, all differ in these types of use cases.

As such, having these different properties allows us to make different engineering tradeoffs for different purposes. It’s a constantly changing equation for us to balance, but nearly all of that happens “behind the curtain”, because what users interact with is …

The Whole System

Now, when we step back and look at the entire picture, what’s actually important is that from the outside, Salesforce is one system. Our customers can purchase licenses to different product lines, but when they do that, they can use a common login identity, interact with common customer support, get awesome docs with Trailhead, and lots more. And, naturally, many of our technological support systems also span everything; for example, our world-class approach to site reliability, customer communication, and incident response.

(It’s worth noting that since Salesforce acquires new products regularly, there’s always some latency with integrating things, so if the product you’re looking at isn’t fully integrated yet, give it a little time!)

When we think about software architecture, that’s where we spend a lot of our effort: how do we continue to make this large, distributed system with many moving parts all function as one seamless user experience? How do we make sure that each of our software products is individually the best in its class, while also making sure that if you use more than one of them, you’re getting multiplied benefits? We’ll talk more about how we approach this, as the Architecture Files continue.

By the way … if you squint, you can make one more level of “zoom out”; our systems (and everyone else’s) are part of the Internet, a single connected network of computer systems. In a way, you can think of all of human achievement as one big distributed system. ALL IS ONE!

So there you have it — the universal map of Salesforce, from big to bigger to biggest.

Got questions about any parts of this? Let’s talk about it!

P.S. If you’d like to hear more detail about how things hang together, and you’re in San Francisco for TrailheaDX, I’ll be giving a talk that includes this information, as well as much more. Come see me!
