By Anchal Nigam, Ashish Gite, and Kumar Kasimala.

In our Engineering Energizers Q&A series, we highlight the engineering minds driving innovation across Salesforce. Today, we spotlight Anchal Nigam, a Senior Software Engineer at Salesforce, who engineers new capabilities in Prompt Builder that ground enterprise AI prompts with live external data and verifiable citations, enabling prompts to combine enterprise data with real-time external information.

Explore how the team extended Prompt Builder to ground AI prompts with external web retrieval for workflows that require information beyond internal CRM datasets, and how it engineered a citation architecture that lets users verify AI-generated responses against original sources to reduce hallucination risk.

What is your team’s mission as it relates to expanding Prompt Builder with web retrieval and citation capabilities for AI prompts?

Our team makes enterprise AI systems useful and trustworthy by ensuring prompts incorporate the right data and generated responses remain verifiable. In many organizations, AI responses influence real business decisions, so the system delivers both intelligent outputs and transparency regarding information sources. We designed Prompt Builder to help prompts safely access broader sources of information while maintaining the reliability, safety, and traceability required for enterprise workloads.

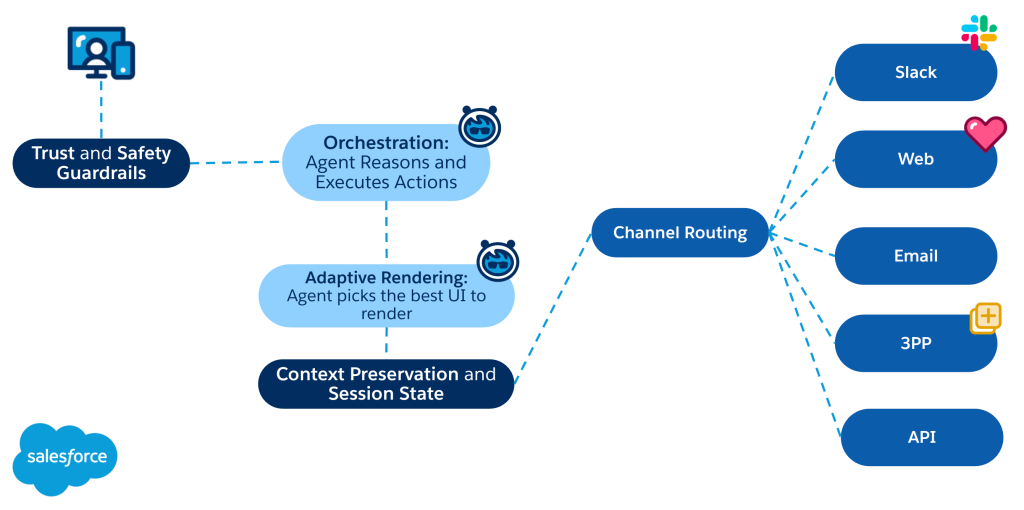

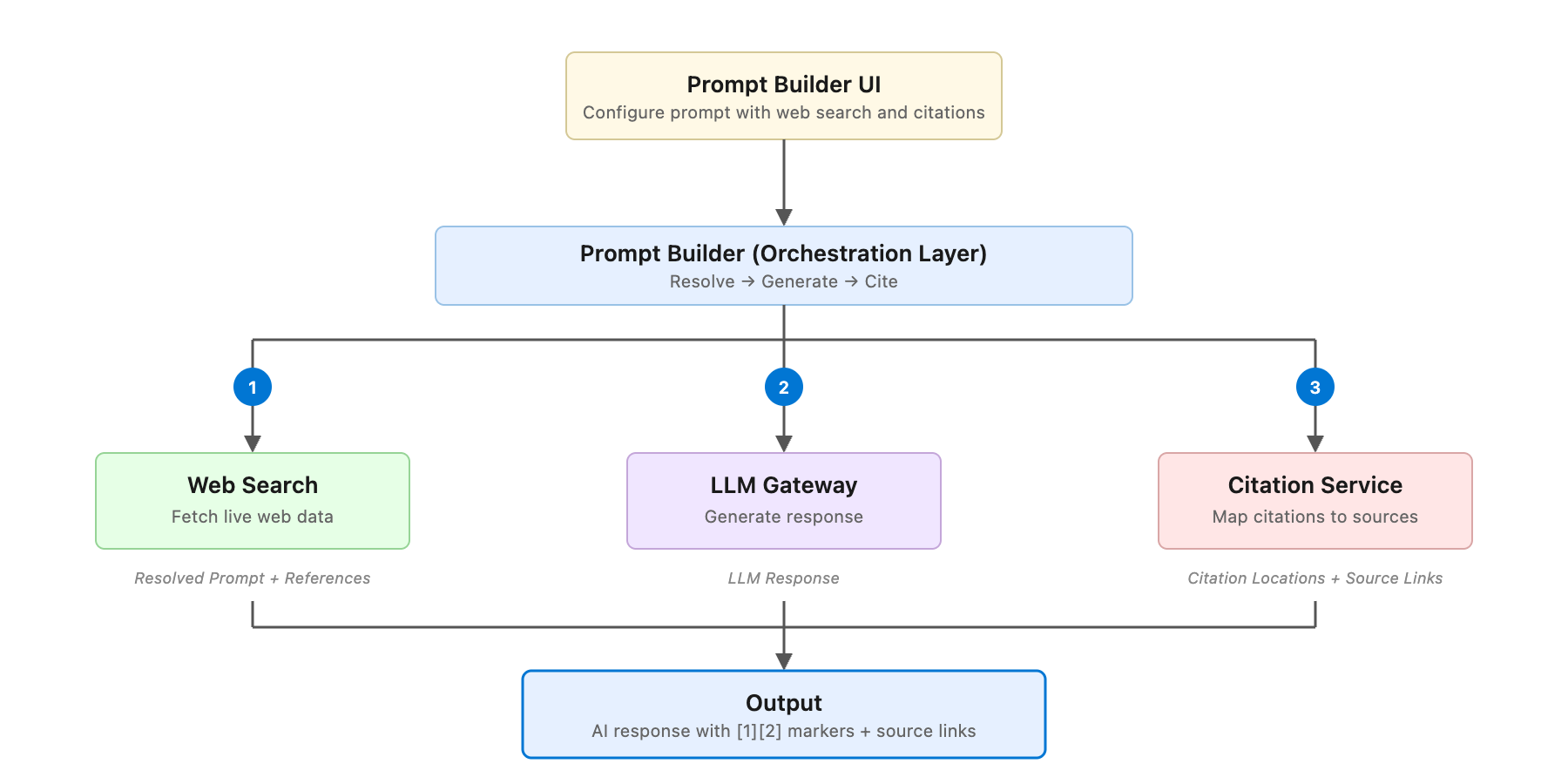

Prompt Builder enables teams to create reusable prompt templates that dynamically incorporate context from multiple data providers before sending the resolved prompt to a large language model. We expanded this system by building two capabilities directly into the platform. Web Retrieval allows prompts to incorporate live external information when use cases require context beyond Salesforce data.

Additionally, citation capabilities allow generated responses to be traced back to the original sources that informed them. Together, these capabilities allow Prompt Builder to produce responses grounded in both enterprise data and external information while giving users visibility into the sources behind the generated output.

What problem does Prompt Builder solve when grounding AI prompts with data beyond the Salesforce ecosystem?

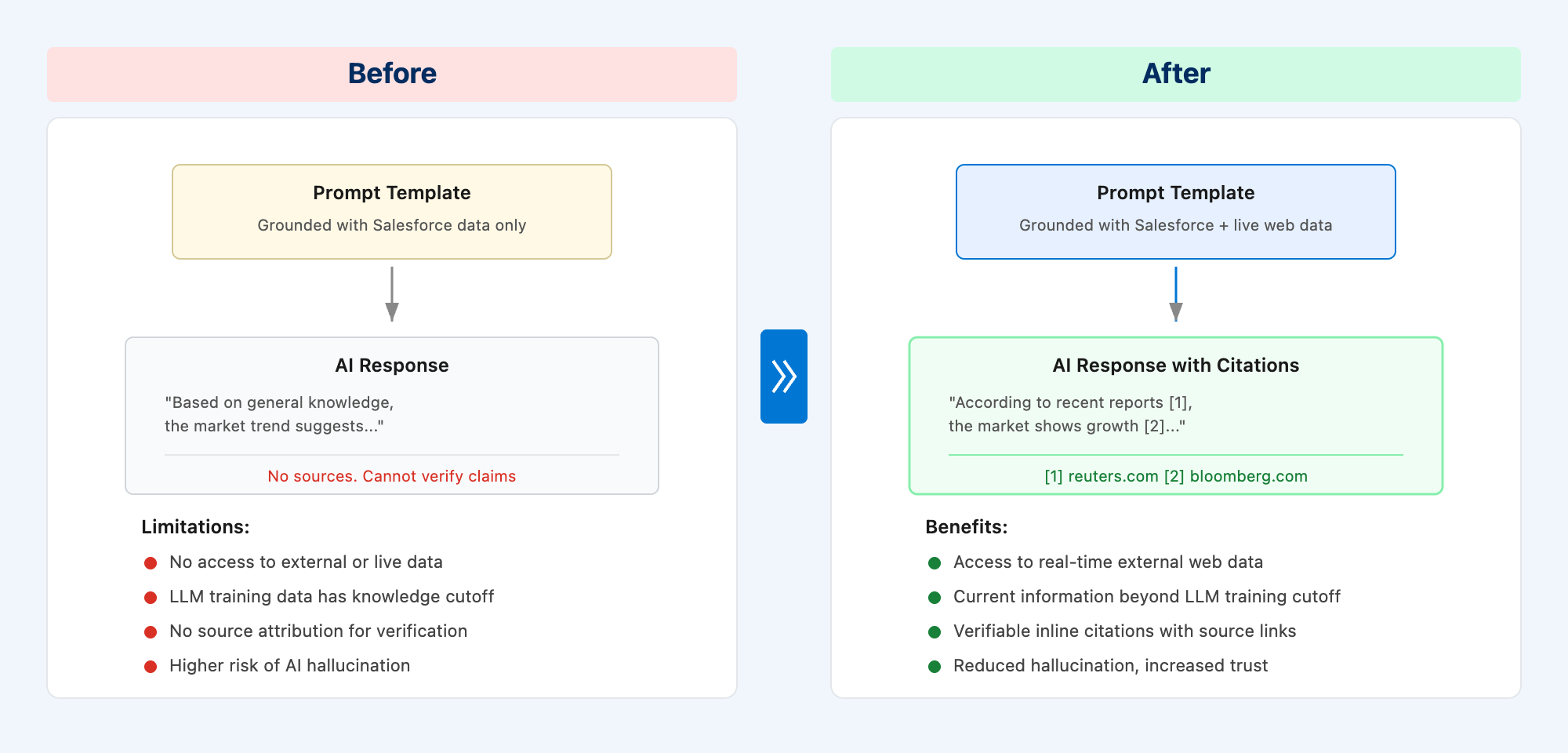

One of the biggest limitations of large language models (LLMs) is that they operate with a fixed training cutoff. If a prompt relies only on the model’s training data, the response may lack the most current or relevant information. Before web retrieval capabilities existed, Prompt Builder grounded prompts using Salesforce data through dynamic placeholders, yet prompts remained limited to internal CRM context.

For many enterprise workflows, this limitation becomes a critical challenge. Sales teams need competitor intelligence, support teams rely on evolving regulatory guidance, and financial analysts depend on real-time market signals. When prompts cannot access information beyond internal systems, the model generates answers without the full context required to produce reliable responses.

We engineered Prompt Builder’s web retrieval capability to close this gap by allowing AI prompts to incorporate live external information before the request reaches the LLM. The system retrieves relevant external content and inserts it into the resolved prompt so the model generates responses using both enterprise data and real-world context.

By building web retrieval directly into the prompt resolution pipeline, we enabled prompts to combine internal Salesforce data with live external knowledge inside a single workflow. Developing this capability required designing a web retrieval architecture that integrates external providers while maintaining enterprise reliability.
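To make the idea of resolving a prompt from several data providers concrete, here is a minimal sketch. It is not Prompt Builder's implementation: the `{!provider.argument}` placeholder syntax, the `resolve_prompt` function, and both stub providers are assumptions invented for illustration, and the web provider returns canned text instead of calling a real search API.

```python
import re
from typing import Callable, Dict

# Hypothetical provider type: receives the placeholder argument, returns text.
Provider = Callable[[str], str]

# Hypothetical placeholder syntax, e.g. {!crm.Account.Name} or {!web.some query}.
PLACEHOLDER = re.compile(r"\{!(\w+)\.([^}]+)\}")


def resolve_prompt(template: str, providers: Dict[str, Provider]) -> str:
    """Replace each placeholder with the text returned by its provider."""

    def substitute(match: re.Match) -> str:
        name, argument = match.group(1), match.group(2)
        provider = providers.get(name)
        if provider is None:
            return match.group(0)  # leave unknown placeholders untouched
        return provider(argument)

    return PLACEHOLDER.sub(substitute, template)


def crm_provider(field: str) -> str:
    # Stand-in for internal CRM data (illustrative record only).
    records = {"Account.Name": "Acme Corp"}
    return records.get(field, "")


def web_provider(query: str) -> str:
    # Stand-in for a live web search; a real provider would call an external API.
    return f"[web results for: {query}]"


template = (
    "Summarize news about {!crm.Account.Name} "
    "using {!web.latest Acme Corp announcements}."
)
resolved = resolve_prompt(template, {"crm": crm_provider, "web": web_provider})
print(resolved)
# Summarize news about Acme Corp using [web results for: latest Acme Corp announcements].
```

The point of the sketch is that internal and external context are merged before the model is called, so the LLM only ever sees the fully resolved prompt.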

Extending prompts with live web data and citations.

What engineering considerations shaped your approach to enabling AI prompts in Prompt Builder to incorporate external retrieval?

We designed the web retrieval architecture for Prompt Builder to integrate external providers while maintaining reliability, safety, and ease of use. Several engineering considerations shaped the system:

- Usability: Users can add web retrieval to prompts without complex configuration or coding.

- Reliability: The system handles latency and timeouts from external providers without disrupting prompt execution.

- Flexibility: Users can select from multiple search providers based on their use case, allowing teams to optimize for quality, cost, or coverage.

- Safety: Filtering layers prevent unsafe content from entering the AI response pipeline.

To support these goals, we built Prompt Builder to orchestrate web retrieval alongside existing data providers while preserving a stable prompt resolution pipeline. These architectural decisions allow the system to incorporate external information while maintaining the reliability expected for enterprise AI systems operating at large scale.
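The reliability consideration above (tolerating slow or failing external providers without disrupting prompt execution) can be sketched as a bounded call with a fallback. This is one common pattern under stated assumptions, not the approach the team describes: `retrieve_with_timeout`, the stub providers, and the timeout values are all hypothetical.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from typing import Callable


def retrieve_with_timeout(
    fetch: Callable[[str], str],
    query: str,
    timeout_s: float,
    fallback: str = "",
) -> str:
    """Call an external provider, returning `fallback` on timeout or error."""
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(fetch, query)
    try:
        return future.result(timeout=timeout_s)
    except FutureTimeout:
        return fallback  # provider too slow: continue without web context
    except Exception:
        return fallback  # provider failed: continue without web context
    finally:
        # Do not block prompt resolution waiting for the slow call to finish.
        pool.shutdown(wait=False)


def fast_provider(query: str) -> str:
    return f"results for {query}"


def slow_provider(query: str) -> str:
    time.sleep(0.3)  # simulates a provider that exceeds the time budget
    return f"late results for {query}"


def failing_provider(query: str) -> str:
    raise ConnectionError("provider unavailable")


print(retrieve_with_timeout(fast_provider, "pricing", timeout_s=1.0))
print(retrieve_with_timeout(slow_provider, "pricing", timeout_s=0.05, fallback="(no web data)"))
print(retrieve_with_timeout(failing_provider, "pricing", timeout_s=1.0, fallback="(no web data)"))
```

In this sketch the prompt still resolves when a provider misbehaves; it simply proceeds with the fallback text in place of the web context.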

What architectural challenges arose when ensuring AI responses generated through Prompt Builder were trustworthy and not hallucinated?

Trust remains a central concern for enterprise AI systems because models often generate confident responses that contain inaccurate information. Users cannot determine if a response is reliable without visibility into the origin of the data.

To solve this, we designed a citation architecture that links responses to their specific sources. When Prompt Builder resolves prompts using web retrieval, the system collects reference metadata for every piece of information.

We implemented a citation service to process the response and the metadata together. This service identifies where citations belong and inserts markers linking snippets to original sources.

The final response includes clickable citations so users can trace claims back to the original source material. This traceability increases confidence in AI outputs and allows organizations to use AI safely within enterprise workflows.
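As a rough illustration of a service that processes a response together with reference metadata, the sketch below finds each referenced snippet in the response, appends a numbered marker after it, and lists the sources at the end. The `Reference` fields, the `cite_by_snippet` function, the marker format, and the sample data are assumptions made for this example; the article does not specify the real metadata schema.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Reference:
    snippet: str  # text in the response that the source supports (assumed field)
    title: str    # human-readable source title (assumed field)
    url: str      # source location (assumed field)


def cite_by_snippet(response: str, references: List[Reference]) -> str:
    """Insert a numbered marker after each snippet found in the response."""
    cited: List[Reference] = []
    for ref in references:
        start = response.find(ref.snippet)
        if start == -1:
            continue  # snippet not present: skip rather than guess a position
        cited.append(ref)
        end = start + len(ref.snippet)
        response = response[:end] + f" [{len(cited)}]" + response[end:]
    if not cited:
        return response
    sources = "\n".join(
        f"[{number}] {ref.title}: {ref.url}" for number, ref in enumerate(cited, 1)
    )
    return response + "\n\n" + sources


# Invented sample data, for illustration only.
answer = "Acme shipped a new widget. Revenue grew 12% last quarter."
refs = [
    Reference("Revenue grew 12% last quarter", "Acme Q3 report", "https://example.com/q3"),
    Reference("text that never appears", "Unused source", "https://example.com/unused"),
]
print(cite_by_snippet(answer, refs))
```

Only sources whose snippets actually appear in the response are numbered and listed, which keeps every marker tied to a verifiable passage.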

High-level overview of web retrieval and citation flow.

What security challenges did you face when rendering citations inside Prompt Builder responses?

Rendering citations within AI responses introduces security risks because the system inserts dynamic markers into browser content. Improper implementation could expose applications to cross-site scripting vulnerabilities.

We mitigate these risks using several safeguards in our rendering pipeline:

- Markdown formatting replaces raw HTML injection for marker insertion.

- Strict sanitization rules validate tags and attributes before rendering.

- Protocol validation limits links to secure HTTPS connections.

- Filtering processes dynamic content before browser display.

These protections allow for interactive citations while preventing security exploits.
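Two of the safeguards listed above, Markdown markers instead of raw HTML and HTTPS-only links, can be sketched in a few lines. The function names and the marker format are hypothetical, and a real pipeline would still pass the rendered output through an HTML sanitizer; this sketch covers only link validation.

```python
from urllib.parse import urlsplit


def is_safe_link(url: str) -> bool:
    """Accept only absolute HTTPS URLs without embedded whitespace."""
    if any(ch.isspace() for ch in url):
        return False
    try:
        parts = urlsplit(url)
    except ValueError:
        return False  # malformed URL (for example, a broken IPv6 host)
    return parts.scheme == "https" and bool(parts.netloc)


def citation_marker(number: int, url: str) -> str:
    """Build a Markdown citation marker; fall back to plain text if unsafe."""
    if not is_safe_link(url):
        return f"[{number}]"  # keep the marker, drop the untrusted link
    # Encode parentheses so the URL cannot terminate the Markdown link early.
    safe_url = url.replace("(", "%28").replace(")", "%29")
    return f"[[{number}]]({safe_url})"


print(citation_marker(1, "https://example.com/report"))
print(citation_marker(2, "javascript:alert(1)"))
print(citation_marker(3, "http://example.com/insecure"))
```

Emitting Markdown rather than HTML means the marker itself never carries tags or attributes, so the renderer and sanitizer remain the only places where HTML is produced.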

What performance tradeoffs shaped your approach to inserting citations efficiently within Prompt Builder responses?

Efficient citation insertion requires a balance between accuracy and speed for long responses. Our system generates two kinds of references: claim-based references, which identify text snippets, and position-based references, which identify character offsets.

The claim-based method scans the entire response to find snippets, which slows down processing as text length increases. We solve this by using a position-based strategy that places markers at specific offsets.

Each inserted marker shifts the offsets of all the text that follows it, so the system processes citations in reverse order. Starting from the end of the response preserves the accuracy of earlier positions and maintains high performance.
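The reverse-order idea can be shown with a short sketch. The `insert_markers` function, the `(offset, marker)` tuple shape, and the sample text are assumptions for illustration; the offsets refer to positions in the original, unmodified response.

```python
from typing import List, Tuple


def insert_markers(response: str, markers: List[Tuple[int, str]]) -> str:
    """Insert markers at character offsets, working from the end backwards."""
    original_length = len(response)
    # Highest offset first: each insertion only moves text that comes after
    # it, so the smaller offsets still point at the right characters.
    for offset, marker in sorted(markers, key=lambda item: item[0], reverse=True):
        if not 0 <= offset <= original_length:
            continue  # ignore offsets that fall outside the original response
        response = response[:offset] + marker + response[offset:]
    return response


text = "Alpha. Beta. Gamma."
# Offsets 5, 11 and 18 point at the period that ends each sentence.
markers = [(5, " [1]"), (11, " [2]"), (18, " [3]")]
print(insert_markers(text, markers))
# Alpha [1]. Beta [2]. Gamma [3].
```

If the same markers were applied front to back without adjustment, the first insertion would push the second and third target positions four characters to the right, and the later markers would land mid-word. Processing from the end avoids having to recompute offsets after every insertion.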

Learn more

- Stay connected — join our Talent Community!

- Check out our Technology and Product teams to learn how you can get involved.